Fix Hidden Crawl Budget Waste for Large Sites: Expert Tips

Ignoring your crawl efficiency is like pouring expensive champagne into a glass with a hole in the bottom. You can keep buying the best bottles. Most of the value still ends up on the floor. It’s a tragedy of wasted resources. Fixing hidden crawl budget waste is how you make sure Googlebot actually sees your most profitable pages. If your site has over 100,000 URLs, you simply can’t afford to let bots wander aimlessly through digital dead ends. You need a map. And you need a gatekeeper.

Search engines have limits. They won’t spend forever trying to parse your messy site architecture. When a bot hits a wall of duplicate content or infinite redirect loops, it leaves. It’s that simple. But you can regain control. By identifying where the leaks are, you turn a chaotic crawl into a streamlined indexing machine. Let’s get to work.

Why is Googlebot ignoring your most important pages?

Your crawl budget is finite. It’s the maximum number of pages search engines will crawl in a given timeframe. When your site is massive, bots often get stuck in low-value URL clusters before hitting your fresh content. It’s frustrating. You publish a phenomenal guide or a new product line, yet it sits unindexed for weeks.

Part of the cause is host load. Google protects your server from crashing by slowing its crawl rate when responses get slow or start failing, so the number of requests it will make is capped. If it spends that limited time on junk, your ranking potential dies. And your revenue follows. You must prioritize. Every request should count.

Start by checking your Crawl Stats report in Google Search Console. Look for high percentages of Not Found (404) or Moved Permanently (301) status codes. These are red flags. They prove you’re paying a tax on errors. Clean them up now.

How can you identify crawl waste in faceted navigation?

Faceted navigation is a nightmare. It creates millions of unique URLs based on every possible filter combination you offer. A user might select blue, size medium, and under fifty dollars. That’s one URL. Switch the order, and it’s a new one. To a bot, this looks like infinite work.
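To make the filter-order problem concrete, here is a minimal sketch that sorts query parameters so reordered filter URLs collapse into one. The `shop.example` domain, the parameter names, and the `normalize_facet_url` helper are all assumptions for illustration, not part of any platform.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode


def normalize_facet_url(url: str) -> str:
    """Return the URL with its query parameters sorted by key, then value."""
    parts = urlsplit(url)
    params = sorted(parse_qsl(parts.query, keep_blank_values=True))
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(params), parts.fragment)
    )


# Hypothetical URLs: the same three filters applied in different orders.
urls = [
    "https://shop.example/shirts?color=blue&size=m&max_price=50",
    "https://shop.example/shirts?size=m&max_price=50&color=blue",
    "https://shop.example/shirts?max_price=50&color=blue&size=m",
]

# Three crawlable URLs, but only one distinct page once the order is fixed.
unique_pages = {normalize_facet_url(u) for u in urls}
```

If your platform always emits filters in one fixed order, the bot never sees the permutations in the first place.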

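You need to keep bots out of these combinations. Use your robots.txt file to prevent bots from crawling unnecessary filter paths. But be careful. A robots.txt rule stops crawling, not indexing. If you block URLs after they’re already indexed, they can linger as zombie pages, because the bot can no longer fetch them to read a canonical or noindex tag. For filter pages that are already indexed, leave them crawlable with a canonical or noindex tag first. Block them once they’ve dropped out.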

And consider using AJAX for filters. This keeps the user experience fast without generating new URLs for every click. It’s cleaner. It’s smarter. Googlebot will thank you by spending its time elsewhere. Focus on the core categories.

Are redirect chains killing your site speed and crawl efficiency?

Redirects are sneaky. A single jump from page A to page B is fine. But when page A goes to B, then B goes to C, and C finally lands on D, you’ve created a chain. Each hop costs time. It drains the bot’s energy. Eventually, it gives up.

These chains often accumulate during site migrations or platform updates. They’re hidden crawl budget waste that most SEOs miss. You should audit your internal links to ensure they point directly to the final destination. Don’t make the bot work twice. Keep it simple.

Use tools like Screaming Frog to find these sequences. Map out the start and end points clearly. Then, update your database to bypass the middle steps. It’s a manual process. But the performance gains are massive. Your indexation rate will climb.
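Once you have a crawler export of redirects, flattening the chains is mostly bookkeeping. The sketch below assumes the export has been loaded into a simple source-to-target dictionary. The paths and the `resolve_redirects` helper are hypothetical.

```python
from typing import Dict, Optional


def resolve_redirects(
    redirects: Dict[str, str], max_hops: int = 10
) -> Dict[str, Optional[str]]:
    """Map every redirecting URL to its final destination.

    A value of None marks a loop or a chain longer than max_hops.
    """
    final: Dict[str, Optional[str]] = {}
    for start in redirects:
        seen = {start}
        current: Optional[str] = start
        hops = 0
        while current in redirects:
            current = redirects[current]
            hops += 1
            if current in seen or hops > max_hops:
                current = None  # loop or runaway chain: needs a manual fix
                break
            seen.add(current)
        final[start] = current
    return final


# Hypothetical export: one three-hop chain and one loop.
redirects = {"/a": "/b", "/b": "/c", "/c": "/d", "/x": "/y", "/y": "/x"}
flattened = resolve_redirects(redirects)
```

Every key that maps to a real destination can be rewritten as a single hop. The `None` entries are the loops to untangle by hand.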

Which URL parameters are draining your resources?

Tracking parameters are useful for marketing. They’re terrible for technical SEO. If every newsletter link adds a unique string to the URL, Google sees thousands of versions of the same page. This is a classic case of duplicate content. It’s also a major drain.

Don’t go looking for the URL Parameters tool in Search Console. Google retired it in 2022. Use self-referencing canonicals to signal the preferred version of every page, and keep tracking parameters out of your internal links. This helps consolidate link equity.
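A rough sketch of how the preferred URL could be derived for a canonical tag, by stripping tracking parameters and keeping the ones that change the content. The parameter lists and the `canonical_url` helper are assumptions. Adjust them to whatever your marketing tools actually append.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Assumed tracking parameters; extend these for your own campaigns.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"gclid", "fbclid"}


def canonical_url(url: str) -> str:
    """Drop tracking parameters and the fragment, keep everything else."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
        and key.lower() not in TRACKING_KEYS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


# Hypothetical newsletter link with two tracking parameters.
tagged = "https://example.com/guide?utm_source=newsletter&utm_campaign=june&page=2"
clean = canonical_url(tagged)
```

The `page` parameter survives because it changes what the page shows. The tracking strings do not.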

And try to use fragments instead of parameters for non-essential data. Search engines generally ignore everything after the hash symbol. This keeps your clean URLs in the index. It protects your crawl budget. Your site becomes leaner.

Do you have a plan for managing internal 404 errors?

A 404 error is a dead end. When a bot hits one, that request is wasted. If your site has thousands of broken internal links, a large share of your crawl goes to pages that don’t exist. Fixing them is one of the most common ways to fix hidden crawl budget waste on large sites. You must heal the wounds.

Fixing these improves the user journey. It also preserves the link juice flowing through your site. If the page is gone forever, use a 410 status code. This tells the bot the page is gone and won’t return. It’s more definitive than a 404.

For pages with existing traffic, redirect them to the most relevant equivalent. Don’t just dump everything onto the homepage. Google tends to treat that as a soft 404, and it hates those. They’re confusing. They’re wasteful. Be precise with your mapping.

Is your XML sitemap working for or against you?

Your sitemap is a priority list. If it’s full of junk, you’re giving the bot a bad itinerary. High-quality sitemaps should only include indexable URLs with 200 OK status codes. Remove redirects, 404s, and non-canonical pages immediately.
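As a minimal sketch of that filter, the function below only emits URLs that return 200, are indexable, and are their own canonical. The page records, their field names, and the `build_sitemap` helper are assumptions about what a crawl export might contain.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages) -> str:
    """Build a sitemap containing only clean, indexable, canonical URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        is_clean = (
            page["status"] == 200
            and page["indexable"]
            and page["canonical"] == page["url"]
        )
        if not is_clean:
            continue  # redirects, 404s and non-canonical pages stay out
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = page["url"]
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical crawl export rows.
pages = [
    {"url": "https://example.com/a", "status": 200, "indexable": True,
     "canonical": "https://example.com/a"},
    {"url": "https://example.com/old", "status": 301, "indexable": False,
     "canonical": "https://example.com/a"},
    {"url": "https://example.com/a?sort=price", "status": 200, "indexable": True,
     "canonical": "https://example.com/a"},
]
sitemap_xml = build_sitemap(pages)
```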

Large sites often need multiple sitemaps. Break them down by category or date. This makes it easier to spot which sections are struggling with indexation. If a sitemap for your blog has 5,000 URLs but only 200 are indexed, you have a problem. You can find the fire faster.
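The blog example above works out to 200 / 5,000, or 4% indexed. A small sketch for flagging weak sitemaps this way follows. The 50% threshold, the file names, the product sitemap figures, and the `flag_weak_sitemaps` helper are all assumptions.

```python
def flag_weak_sitemaps(coverage, min_ratio=0.5):
    """Return (sitemap, indexed ratio) pairs below min_ratio, worst first."""
    flagged = []
    for name, (submitted, indexed) in coverage.items():
        ratio = indexed / submitted if submitted else 0.0
        if ratio < min_ratio:
            flagged.append((name, round(ratio, 3)))
    return sorted(flagged, key=lambda item: item[1])


# (submitted, indexed) counts per sitemap; the product figures are made up.
coverage = {
    "sitemap-blog.xml": (5000, 200),
    "sitemap-products.xml": (12000, 10800),
}
weak = flag_weak_sitemaps(coverage)
```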

And keep them updated. A stale sitemap is a useless sitemap. Automate the process so new content appears instantly. But ensure your removal logic is just as fast. It keeps the bot focused. It keeps your site relevant.

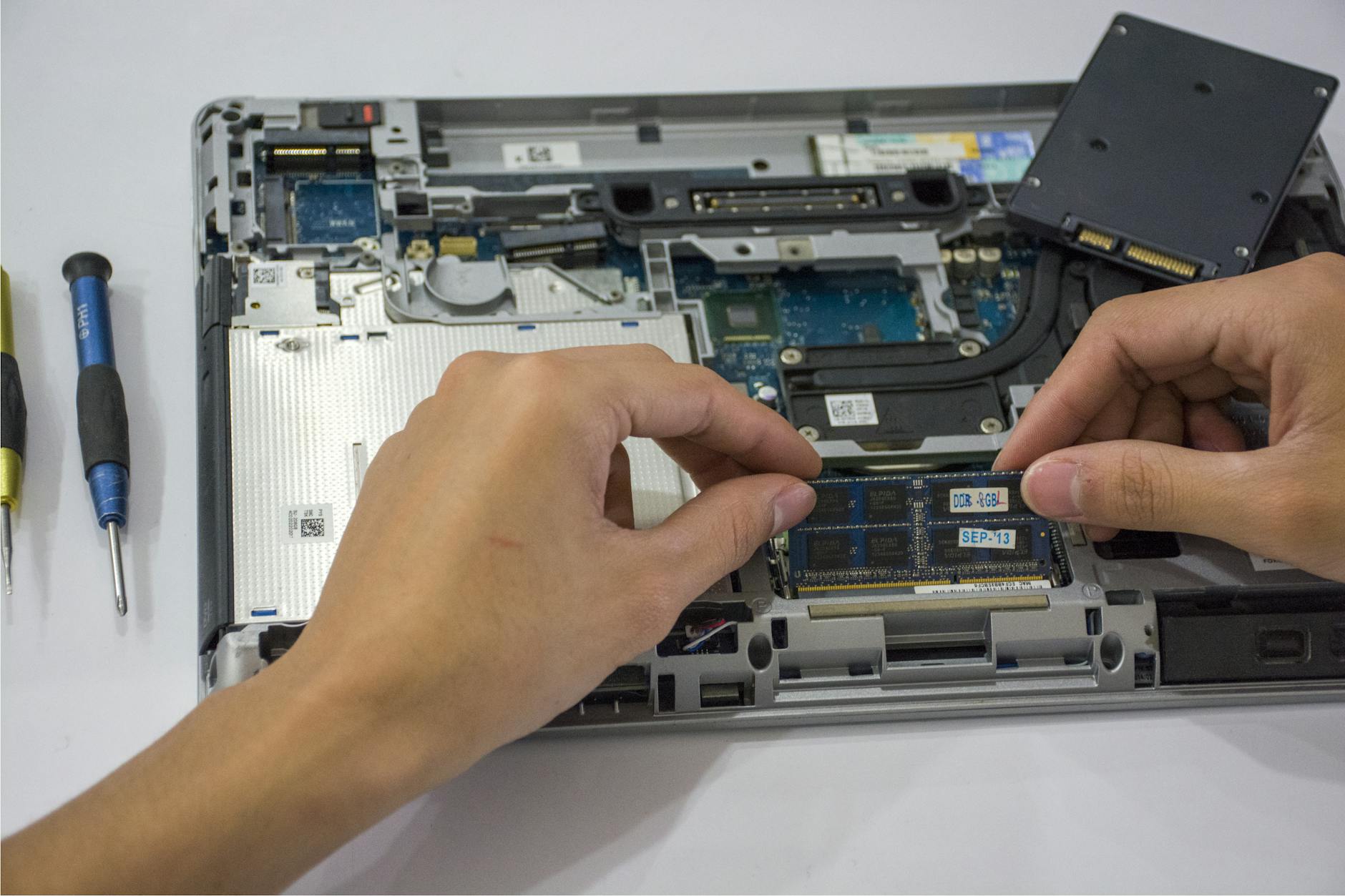

How does server response time affect your crawl limit?

Fast sites get crawled more. It’s a simple relationship. If your server takes two seconds to respond, Googlebot will limit its visits. It doesn’t want to slow down your site for real users. Speed is a prerequisite for crawl budget optimization.

Check your Time to First Byte (TTFB). If it’s high, your hosting or your code is the bottleneck. Use caching layers to serve content faster. Compress your images and minify your scripts. Every millisecond you save is a potential new page crawled.

And monitor your logs. Log file analysis shows exactly what the bots are doing. It’s the truth. You’ll see if they’re hitting the same script over and over. You’ll see if they’re getting stuck on heavy assets. Fix the speed, and the volume will follow.
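A rough starting point for that analysis, assuming your server writes the common combined log format. The regex, the sample lines, and the `summarize_googlebot` helper are illustrative. Real logs vary, so adjust the pattern to your own.

```python
import re
from collections import Counter

# Assumes combined log format: "METHOD path protocol" status bytes "referer" "agent"
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def summarize_googlebot(lines):
    """Count status codes and requested paths for hits claiming to be Googlebot."""
    statuses, paths = Counter(), Counter()
    for line in lines:
        match = LOG_PATTERN.search(line.strip())
        # The user agent can be spoofed; verify with reverse DNS before trusting it.
        if not match or "Googlebot" not in match.group("agent"):
            continue
        statuses[match.group("status")] += 1
        paths[match.group("path")] += 1
    return statuses, paths


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
# Hypothetical log lines for illustration only.
sample = [
    f'66.249.66.1 - - [10/Oct/2023:13:55:36 +0000] "GET /shirts?color=blue HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Oct/2023:13:55:40 +0000] "GET /shirts?color=blue HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Oct/2023:13:56:02 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT}"',
    '203.0.113.9 - - [10/Oct/2023:13:57:10 +0000] "GET /checkout HTTP/1.1" 200 811 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/118.0"',
]
statuses, paths = summarize_googlebot(sample)
```

Sort `paths` by count and the repeat offenders show up at the top. The `statuses` counter gives you the share of requests lost to errors.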

Audit your site for crawl efficiency today

Stop letting vanity metrics distract you from technical health. Large sites live and die by their indexation efficiency. If you don’t manage your crawl budget, the search engines will manage it for you. And you won’t like their choices. They’ll cut corners. They’ll miss your latest updates.

Take these steps to reclaim your visibility. Start with a deep audit. Identify the loops, the chains, and the dead ends. Clean your sitemaps. Block the filters. Once you remove the friction, Googlebot will glide through your site. You’ll see faster indexing. You’ll see better rankings. And you’ll finally stop wasting your most precious digital resource.

Frequently Asked Questions

- How often should I audit my crawl budget? You should perform a deep dive at least once a quarter. However, check your Google Search Console Crawl Stats weekly for sudden spikes or errors.

- Does every site need to worry about crawl budget? No. If your site has fewer than 10,000 pages, Google will likely crawl everything worth seeing. This is a strategy specifically for enterprise SEO and large e-commerce platforms.

- Can I use Noindex to save crawl budget? Not effectively. A noindex tag still requires the bot to crawl the page to see the tag. To save budget, you must block the crawl entirely via robots.txt.

- Will improving my site speed really increase my crawl rate? Yes. Google has confirmed that faster server response times allow their bots to request more URLs without taxing your system. It’s a direct correlation.