-

AI SEO vs Manual Audit Checklist: Top Comparison 2026

Your website is leaking revenue every second it stays unoptimized. It’s a silent drain on your marketing budget. When you weigh an AI SEO audit vs manual technical audit checklist, you are choosing between raw speed and surgical precision. Modern search landscapes demand both to survive. You need to know if your technology is helping or hurting your visibility right now. It’s a high-stakes decision for any growing brand.

Why should you consider an AI SEO audit vs manual technical audit checklist today?

Speed defines the current market. Algorithms change hourly. An AI SEO audit vs manual technical audit checklist debate usually starts with how much time you actually have to spare. Automated tools can crawl millions of pages in minutes. They find broken links instantly. They flag duplicate content across massive domains without breaking a sweat. It’s impressive tech. But machines often lack the nuance of human intent. They see a code error where a human sees a strategic choice. You need to balance these two worlds.

Manual audits offer depth that software cannot replicate. A human expert looks at your brand voice. They understand how a specific site architecture serves your unique sales funnel. Machines follow scripts while humans follow logic. Both methods uncover critical issues. Utilizing a manual technical audit checklist ensures you don’t miss the subtle details that frustrate actual users. AI tools are great for catching the obvious stuff fast. Humans are better at fixing the complex puzzles that stop conversions.

Integrating both methods creates a superior strategy. Use AI for the heavy lifting. Deploy humans for the creative problem-solving. This hybrid approach is the gold standard in 2026. It saves money while increasing accuracy. You don’t have to choose just one path. You should use AI to find the needle and a human to decide if the needle is worth moving.

What are the core components of a modern manual technical audit checklist?

Every audit starts with crawlability. You must ensure bots can reach your content safely. If Google can’t see your pages, they don’t exist. Check your robots.txt file first. It’s the gatekeeper of your domain. Look for accidental disallow rules. These small typos can kill your entire indexation strategy. It happens more often than you think. Tighten your internal linking structure next. Links are the roads search engines travel.
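As a rough illustration of the robots.txt check, the sketch below uses Python’s standard `urllib.robotparser` to test a short list of pages against a set of rules. The rules, the `example.com` URLs, and the `blocked_urls` helper are made up for the example; in practice you would paste in your own file and your own must-rank pages.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules block for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse text directly, no network request
    return [url for url in urls if not parser.can_fetch(agent, url)]


# Hypothetical rules: "Disallow: /blog" was meant for a private folder,
# but it also blocks every URL whose path starts with /blog.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /blog
"""

# Hypothetical pages that must stay crawlable.
MUST_BE_CRAWLABLE = [
    "https://example.com/",
    "https://example.com/blog/seo-audit",
    "https://example.com/pricing",
]

blocked = blocked_urls(ROBOTS_TXT, MUST_BE_CRAWLABLE)
print(blocked)
```

A non-empty result means at least one page you care about is behind a disallow rule, which is the kind of small typo worth catching before anything else.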

Indexing is your second priority. Verify which pages are actually live in search results. Use Search Console to hunt down 404 errors. These are dead ends for your customers. Nobody likes a broken link. Fix them with 301 redirects immediately. A comprehensive manual technical audit checklist always includes a deep dive into sitemaps. Ensure your XML sitemap is clean and current. It should only contain the pages you want to rank. Don’t confuse the bots with clutter.

Site speed is non-negotiable now. Users will leave if your site takes three seconds to load. Check your Core Web Vitals manually. Use tools like PageSpeed Insights for data but interpret it with a human eye. Consider how images impact the mobile experience. Mobile-first indexing is the only reality we live in. Your site must be fast and responsive. If it fails on a phone, it fails everywhere. Focus on these pillars to build a strong foundation.

- Robots.txt verification: Ensure no critical folders are blocked from search engines.

- Sitemap health: Remove non-canonical or redirected URLs from your XML files.

- Internal link integrity: Fix all broken links and eliminate orphan pages.

- Canonicalization: Prevent duplicate content issues by setting preferred versions of your pages.

- Site security: Confirm HTTPS is active and all certificates are valid.
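The sitemap item on this checklist can be partly scripted. The following is a minimal sketch, assuming you already have crawl results as a mapping from URL to status code and canonical URL (the `crawl` dictionary, the sample sitemap, and the `urls_to_remove` helper are all hypothetical). It flags sitemap entries that redirect, fail, or point to a different canonical.

```python
import xml.etree.ElementTree as ET

# Namespace used by the sitemaps.org XML format.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> list[str]:
    """Extract every <loc> value from a sitemap document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS) if loc.text]


def urls_to_remove(xml_text: str, crawl: dict) -> dict:
    """Map each problematic sitemap URL to the reason it should be removed.

    `crawl` maps URL -> (status_code, canonical_url); this shape is an assumption.
    """
    flagged = {}
    for url in sitemap_urls(xml_text):
        status, canonical = crawl.get(url, (None, None))
        if status is None:
            flagged[url] = "not crawled"
        elif status != 200:
            flagged[url] = f"returns status {status}"
        elif canonical != url:
            flagged[url] = f"canonical points to {canonical}"
    return flagged


SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/old</loc></url>
  <url><loc>https://example.com/shoes?color=red</loc></url>
</urlset>"""

crawl = {
    "https://example.com/": (200, "https://example.com/"),
    "https://example.com/old": (301, None),
    "https://example.com/shoes?color=red": (200, "https://example.com/shoes"),
}

flagged = urls_to_remove(SITEMAP, crawl)
for url, reason in flagged.items():
    print(url, "->", reason)
```

A human still decides what to do with each flagged URL; the script only narrows the list.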

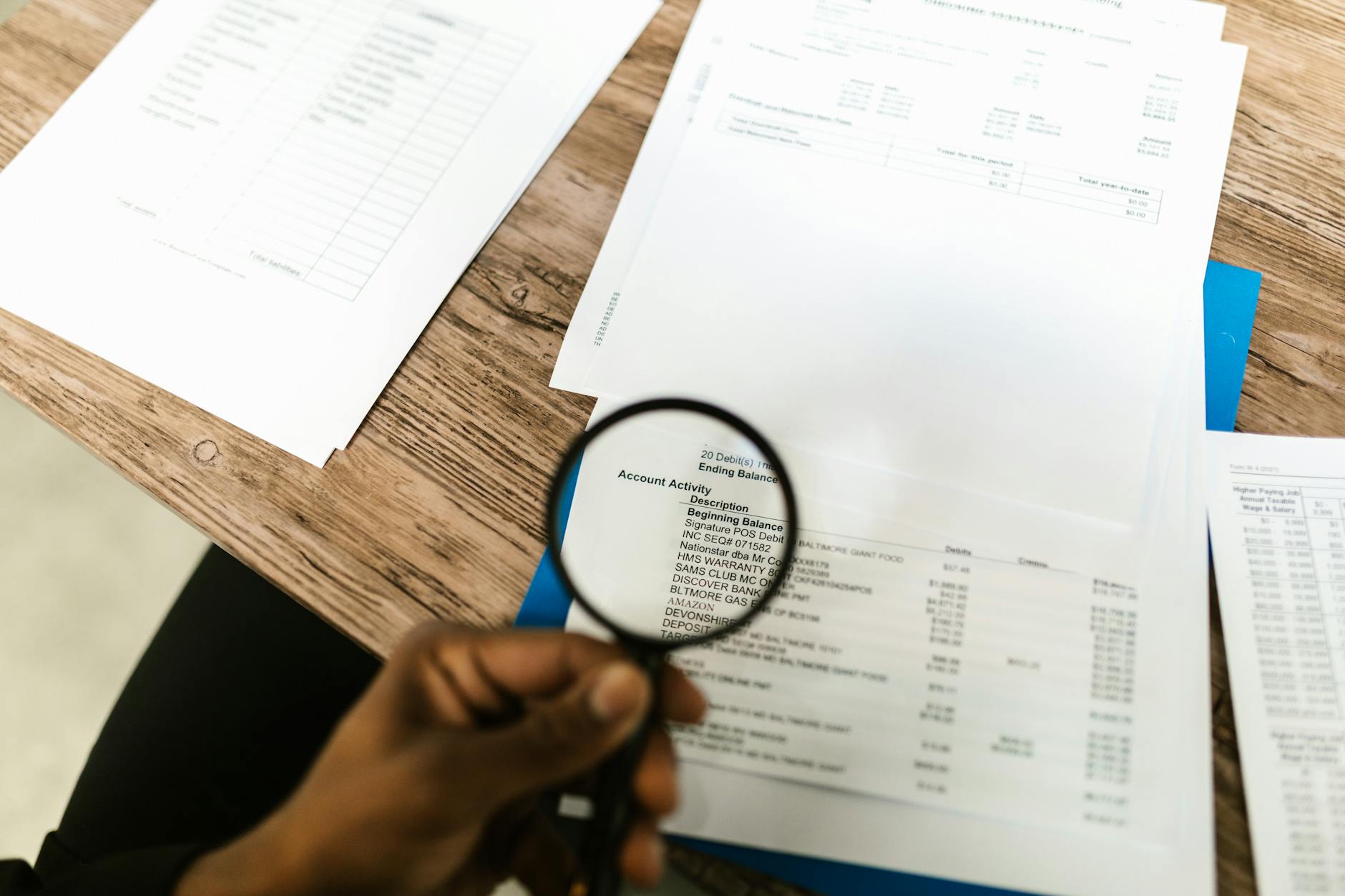

Photo by RDNE Stock project on Pexels

How does an AI SEO audit differ from traditional methods in 2026?

AI doesn’t just scan. It predicts. Modern AI tools use machine learning to forecast how specific technical changes will impact your rankings. This is a massive shift from reactive auditing. When evaluating an AI SEO audit vs manual technical audit checklist, consider the predictive power. AI can analyze historical data to tell you which errors are actually costing you traffic. It prioritizes your to-do list based on potential ROI. You spend less time guessing and more time fixing.

Automation handles scale effortlessly. Managing a site with 100,000 pages manually is impossible. AI treats massive data sets like a quick snack. It identifies patterns in server logs that humans might miss. It finds recurring issues in your JavaScript execution. This level of technical depth used to take weeks. Now it takes hours. But remember that AI can produce false positives. It might flag an intentional design choice as a technical error. Always verify the output.

The cost structure is changing too. AI tools require a subscription but reduce the billable hours for consultants. However, you still need a skilled operator. Someone must prompt the AI correctly. They must also implement the findings. Without a human at the helm, an AI audit is just a long list of complaints. The real value comes from turn-key insights. Use tech to find the fire. Use people to put it out.

Can AI tools reliably replace a manual technical audit checklist?

The short answer is no. AI is a powerful assistant but a poor leader. It lacks the context of your specific business goals. A manual technical audit checklist considers your competition and your unique value proposition. AI sees code but humans see customers. A tool might tell you to add more text to a page for SEO. A human knows that more text will ruin your conversion rate. Context is everything in high-end search strategy.

Human auditors find the ghost in the machine. They spot UX issues that don’t show up in a standard scan. For example, a pop-up might pass a technical crawl but annoy every single visitor. AI won’t always tell you that your layout is confusing. It won’t realize that your call-to-action button is the same color as the background. These are human problems. They require human solutions. You need the eyes of a specialist to catch these nuances.

Balance is the only way forward. Start with an AI scan to clear the low-hanging fruit. Let it find the broken images and the missing meta tags. Once the easy stuff is done, bring in the experts. They will look at the sophisticated problems. They will analyze your entity relationship data and your knowledge graph presence. This ensures your site is technically sound and strategically dominant. Don’t settle for half the picture.

Successful SEO in 2026 requires a symbiotic relationship between automated data processing and human strategic oversight. One provides the map, the other drives the car.

Which technical issues are best discovered via an AI SEO audit?

Pattern recognition is where AI shines. It can spot issues across thousands of URLs that look fine individually. Let’s say your site has a subtle pagination error that only affects every tenth page. A human would never find that. An AI will flag it in seconds. It excels at identifying mass duplicate content issues. It also tracks server response times over long periods. This reveals intermittent performance spikes that a single manual check would miss.

Natural Language Processing is another advantage. AI can audit your content for semantic density and entities. It compares your site to the top performers in your niche. It tells you exactly which topics you are missing. This goes beyond traditional keyword counting. AI understands concepts and relationships. It helps you build a more authoritative site. While a human can do this, the AI does it at a scale that is simply unmatched. It provides a data-backed roadmap for content expansion.

- Large-scale duplicate content: Identifying similar blocks of text across massive e-commerce catalogs.

- Intermittent server lag: Monitoring page load stability over several days or weeks.

- Semantic gaps: Finding topics your competitors cover but you have ignored.

- Schema markup errors: Validating structured data code across thousands of unique pages.

- Log file analysis: Determining exactly how often search bots visit specific sections of your site.
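To make the log file item concrete, here is a small sketch that counts bot requests per top-level site section. It assumes access logs in the common "combined" format and identifies the bot by a substring in the user-agent string, which can be spoofed, so treat the counts as approximate. The sample lines and the `bot_hits_by_section` helper are invented for the example.

```python
import re
from collections import Counter

# Matches the request, status, and final quoted field (user agent) of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[\d.]+" \d{3} .*"(?P<agent>[^"]*)"$'
)


def bot_hits_by_section(lines, bot: str = "Googlebot") -> Counter:
    """Count requests from `bot` grouped by the first path segment."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if match and bot in match.group("agent"):
            first_segment = match.group("path").lstrip("/").split("/", 1)[0]
            hits["/" + first_segment] += 1
    return hits


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"

# Hypothetical log lines.
LOG = [
    f'66.249.66.1 - - [10/Jan/2026:10:00:00 +0000] "GET /blog/post-1 HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:05 +0000] "GET /blog/post-2 HTTP/1.1" 200 4980 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:09 +0000] "GET /products/shoes HTTP/1.1" 200 7300 "-" "{GOOGLEBOT}"',
    f'203.0.113.7 - - [10/Jan/2026:10:00:12 +0000] "GET /blog/post-1 HTTP/1.1" 200 5120 "-" "{BROWSER}"',
]

hits = bot_hits_by_section(LOG)
print(hits.most_common())
```

Sections that never appear in the output are worth a closer manual look, since they may be receiving little or no crawl attention.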

How do you perform a manual technical audit with high precision?

Start with your head. Open your site in a clean browser window. Look at your URL structure directly. Are the links clean and descriptive? Long, messy URLs hurt your click-through rate. Check your headings manually on key pages. Ensure you have one H1 and a logical flow of H2 and H3 tags. This hierarchy helps both users and bots. It makes your content easy to digest.
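If you want a quick sanity check before reading a page by eye, the heading rule above (one H1, no skipped levels) can be sketched with Python’s built-in `html.parser`. The `heading_issues` helper and the sample markup are illustrative only; the script does not judge whether the headings make sense to a reader.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Record the level of every h1-h6 tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Return human-readable problems with the heading hierarchy."""
    collector = HeadingCollector()
    collector.feed(html)
    issues = []
    h1_count = collector.levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    for previous, current in zip(collector.levels, collector.levels[1:]):
        if current > previous + 1:
            issues.append(f"h{previous} is followed by h{current}, skipping a level")
    return issues


page = "<h1>Audit guide</h1><h2>Crawling</h2><h4>Robots rules</h4>"
print(heading_issues(page))
```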

Test your forms and checkout flows. This is the most critical manual task. No AI tool can truly simulate the frustration of a buggy checkout button. Go through the process on your phone. See if the keyboard covers the input fields. Check whether the layout shifts when you tap a button, since unexpected movement is what the Cumulative Layout Shift metric in Core Web Vitals measures. These tactile experiences are the heart of SEO. Search engines reward sites that people actually enjoy using. Don’t ignore the human element.

Review your internal linking logic. Are you sending juice to your most important pages? A manual technical audit checklist should include a review of your anchor text. Diverse, descriptive anchors are better than repetitive ones. Look for keyword cannibalization issues by searching your site for specific terms. If two pages are fighting for the same spot, merge them. One strong page is always better than two weak ones. This requires a human decision on which content is superior.

What is the ideal workflow for an AI SEO audit vs manual technical audit checklist?

Efficiency starts with the right order of operations. Run your AI tools first. This creates a raw list of technical debt. Group these issues by severity. Focus on indexation blockers and 404 errors immediately. These are the fires you must put out. Once the site is stable, move to the manual phase. This is where you refine the strategy. You look at the data the AI provided and decide what actually matters.
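The grouping step can be as simple as the sketch below. Only the first two ranks (indexation blockers, then 404 errors) come from the workflow described above; the remaining issue types, their order, and the `Issue` and `triage` names are assumptions you would tune to your own site.

```python
from dataclasses import dataclass

# Lower number = fix first. Ranks after "404" are illustrative assumptions.
SEVERITY = {"indexation_blocker": 0, "404": 1, "redirect_chain": 2, "missing_meta": 3}


@dataclass
class Issue:
    url: str
    kind: str


def triage(issues: list[Issue]) -> dict[str, list[str]]:
    """Group issue URLs by kind, ordered from most to least severe."""
    ordered = sorted(issues, key=lambda issue: SEVERITY.get(issue.kind, len(SEVERITY)))
    grouped: dict[str, list[str]] = {}
    for issue in ordered:
        grouped.setdefault(issue.kind, []).append(issue.url)
    return grouped


# Hypothetical raw output from an automated crawl.
raw = [
    Issue("/a", "missing_meta"),
    Issue("/b", "404"),
    Issue("/c", "indexation_blocker"),
    Issue("/d", "404"),
]

plan = triage(raw)
for kind, urls in plan.items():
    print(kind, urls)
```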

Communication is the next step. Take the audit findings to your development team. Don’t just dump a PDF on their desk. Explain the business impact of each fix. Developers are more likely to help when they understand the goal. Use clear, non-technical language. Show them how a faster site increases revenue. This bridge between marketing and dev is essential. It’s the only way things actually get fixed.

Finally, set up continuous monitoring. The AI SEO audit vs manual technical audit checklist debate shouldn’t be a one-time event. Use AI to watch your site 24/7. It should alert you if your rankings drop or if a new crawl error appears. Schedule a deep manual review every quarter. This ensures your brand strategy is still aligned with your technical performance. It keeps you ahead of the competition without burning out your team.

Modernize your search strategy for maximum results

Your search visibility is a living asset. It needs constant care and the right tools. When you choose an AI SEO audit vs manual technical audit checklist, you’re investing in your digital future. Start by automating the boring stuff. Let the machines find the broken code and the missing tags. This frees up your brain for the hard work. Focus on your user experience and your brand authority. These are the things that actually drive sales in 2026.

Don’t wait for your traffic to drop before taking action. Conduct a comprehensive audit this week. Use the latest software to scan your domain and a manual technical audit checklist to verify the findings. This proactive approach saves thousands in lost revenue. It builds a site that search engines trust and users love. Grab your tools and start auditing. Your bottom line will thank you.

Frequently asked questions regarding technical SEO audits

Is an AI SEO audit better than a manual one?

Neither is better on its own. They serve different purposes. AI is superior for data processing and finding patterns at scale. A manual audit is essential for strategy, context, and user experience. The best results come from combining both methods into a single, cohesive workflow.

How often should I run a technical SEO audit in 2026?

You should use AI monitoring tools daily to catch sudden errors. A full, deep-dive manual technical audit checklist review should happen at least once every three months. If you make major site changes or migrate your domain, do an audit immediately to ensure nothing broke during the transition.

What is the most common technical SEO error found?

Redirect loops and 404 errors remain the most frequent issues. These are often caused by old plugins or poor site migrations. Even with advanced AI, these crawlability issues persist. Regularly cleaning your internal links is the easiest way to improve your site health.

Do I need an expert to interpret an AI audit report?

Yes. Raw AI reports often contain thousands of data points. An expert helps you prioritize these tasks based on ROI and difficulty. Without an expert, you might waste weeks fixing minor issues while missing a massive strategic flaw that is hurting your rankings.

-

Fix AI Hallucinations in Programmatic SEO Content | 2026 …

Building a massive site through automation is like hosting a dinner party where the chef is a robot that occasionally confuses salt with powdered glass. You can produce thousands of pages in minutes. The scale is impressive. But if those pages claim your software integrates with a toaster or that your CEO is a literal astronaut, the vanity of volume fades fast. Mastering how to fix AI hallucinations in programmatic SEO content is no longer just a technical chore: it’s a survival skill for high-growth publishers in 2026. You’re building a library of facts, and one parasitic lie can rot the entire foundation. It’s time to stop the bleeding.

The stakes have never been higher for automated sites. Search engines now prioritize information density and verifiable accuracy above all else. If your programmatic SEO workflow leaks false data, your traffic won’t just plateau. It’ll vanish. But you can secure your pipelines. You can turn a reckless AI model into a disciplined data clerk. This guide shows you exactly how to do it.

What causes AI hallucinations in programmatic workflows?

Models are prediction engines. They don’t know facts like humans do: they calculate the most likely next word. When your dataset has gaps, the AI fills them with plausible fiction. It’s trying to be helpful. That help is deadly. If you ask for a unique description of a 404 error but provide no context, the model makes up a creative history of the status code. It prioritizes flow over truth. You lose trust. Readers bounce. Rankings drop.

Data fragmentation is often the culprit. Most programmatic SEO setups pull from multiple APIs or flat files. If the mapping is off, the AI gets confused. It struggles to bridge the gap between raw numbers and natural language. It builds a bridge out of thin air. And that bridge collapses the moment a user reads it.

How to fix AI hallucinations in programmatic SEO content through grounding?

Grounding is your defensive shield. It forces the Large Language Model to stay within the boundaries of a provided dataset. Think of it as an open-book test. You give the AI the textbook. It’s not allowed to guess. To start fixing AI hallucinations, you must build a robust Knowledge Graph or a clean CSV that serves as the source of truth. The model only writes based on these facts.

Retrieval-Augmented Generation (RAG) is the gold standard for this. You store your verified brand and product facts in a vector database. When the content engine runs, it pulls the specific context first. It feeds that context into the prompt. The AI then synthesizes the data. It doesn’t invent. It organizes. But you must verify the source data first. Garbage in means garbage out.

And you must use explicit negative constraints. Tell the AI it cannot mention features not found in the input. Use phrases like: “If the value is null, do not mention the attribute.” This prevents the model from assuming a feature exists just because its competitors have it. It’s about strict control. You are the architect. The AI is the hammer.
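One way to apply negative constraints is to build the prompt from the data record itself, so that empty attributes never reach the model at all. The sketch below is a minimal illustration; the record fields, the wording of the instructions, and the `build_grounded_prompt` helper are all hypothetical, and no particular model API is assumed.

```python
def build_grounded_prompt(record: dict) -> str:
    """Build a prompt that lists only populated attributes and forbids anything else."""
    # Drop null or empty values so the model never sees an attribute it could embellish.
    facts = {key: value for key, value in record.items() if value not in (None, "")}
    fact_lines = "\n".join(f"- {key}: {value}" for key, value in facts.items())
    return (
        "Write a product description using ONLY the facts listed below.\n"
        "Do not mention any feature, integration, or number that is not listed.\n"
        "If an attribute is missing, do not mention it.\n\n"
        f"Facts:\n{fact_lines}"
    )


# Hypothetical row from a product CSV; the integration field is empty.
record = {"name": "Acme CRM", "price": "$10/month", "slack_integration": None}

prompt = build_grounded_prompt(record)
print(prompt)
```

Filtering the nulls in code and repeating the rule in the instructions is a belt-and-braces choice: the model has neither the data nor the permission to invent the missing attribute.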

Photo by Sergey Meshkov on Pexels

Why should you use content coverage scores for quality control?

You need a way to measure lies. A content coverage score compares the generated text against your original data points. It’s a mathematical check. If the data says the price is ten dollars, but the text says twelve, the score drops. You can automate this audit. Script a secondary AI agent to cross-reference every claim. It’s a second set of eyes.
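There is no single agreed formula for a coverage score, so the sketch below uses one simple assumed definition: the share of source values that appear verbatim in the generated text. Exact substring matching is crude (it would miss “ten dollars” for “$10” and could match “$1” inside “$12”), which is why teams often hand this comparison to a second model. The `coverage_score` helper and sample data are illustrative.

```python
def coverage_score(facts: dict, text: str) -> tuple[float, list[str]]:
    """Return (score, missing_keys) where score is the share of fact values found in text."""

    def normalize(value) -> str:
        # Lowercase and collapse whitespace so formatting differences do not count as errors.
        return " ".join(str(value).lower().split())

    haystack = normalize(text)
    missing = [key for key, value in facts.items() if normalize(value) not in haystack]
    score = 1 - len(missing) / len(facts) if facts else 1.0
    return score, missing


# Hypothetical source data and generated copy with a wrong price.
facts = {"price": "$10", "plan": "Starter"}
generated = "The Starter plan costs $12 per month."

score, missing = coverage_score(facts, generated)
print(score, missing)
```

Any page whose score falls below a threshold you choose can be queued for a rewrite, with the `missing` list telling the reviewer where to look.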

High scores mean the model followed instructions. Low scores trigger a rewrite. In 2026, many teams use Graph-RAG for precise data retrieval. This connects entities and their relationships. It ensures that if you are writing about a specific software integration, the AI knows exactly which version is compatible. It doesn’t guess. It checks the graph. It reports back.

This automated verification saves hours of human labor. You only review the failures. It makes scaling possible. Without it, you are just gambling. And the house always wins.

How does a human in the loop workflow prevent misinformation?

Algorithms have limits. Even the best models miss subtle nuance or sarcasm. You need a human touch. A human in the loop system samples your programmatic output for manual verification. Don’t check every page. That’s impossible at scale. Instead, audit a statistically significant percentage. Look for patterns. Find the logical leaps.
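How many pages is enough? One common way to size a review sample is the standard formula for estimating a proportion, with a finite population correction. Whether this matches what your team means by “statistically significant” is an assumption, as are the default 95% confidence level and 5% margin of error; the helper names are hypothetical.

```python
import math
import random


def audit_sample_size(total_pages: int, margin: float = 0.05,
                      z: float = 1.96, p: float = 0.5) -> int:
    """Pages to review so the observed error rate is within `margin` at the given z-score."""
    if total_pages <= 0:
        return 0
    n0 = (z ** 2) * p * (1 - p) / margin ** 2      # sample size for an unlimited population
    n = n0 / (1 + (n0 - 1) / total_pages)          # finite population correction
    return min(total_pages, math.ceil(n))


def pick_pages(urls: list[str], seed: int = 0, **kwargs) -> list[str]:
    """Draw a reproducible random sample of URLs for manual review."""
    rng = random.Random(seed)
    return rng.sample(urls, audit_sample_size(len(urls), **kwargs))


# Hypothetical programmatic site with 5,000 generated pages.
urls = [f"https://example.com/page-{i}" for i in range(5000)]
sample = pick_pages(urls)
print(len(sample))
```

Drawing the sample at random matters as much as its size: reviewing only the first pages in the queue tells you little about page five thousand.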

If you find a hallucination in page fifty, it’s likely present in page five thousand. Fix the prompt. Update the data source. Then regenerate. This feedback loop is essential for how to fix AI hallucinations in programmatic SEO content effectively. You are fine-tuning the system. You are teaching the machine.

Focus your human reviewers on high-impact pages. Check your top-performing templates. These are the faces of your brand. They deserve the most scrutiny. One wrong sentence here can tank your reputation. Be vigilant. Stay involved. Don’t walk away.

Can semantic tool selection reduce errors in automated pages?

Sometimes the model isn’t the problem. The tools are. Semantic tool selection allows your AI agent to choose the right database or API for a specific query. It’s about precision. If the AI needs a price, it goes to the pricing API. If it needs a feature list, it goes to the documentation database. It doesn’t rely on its training data.
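The routing idea can be sketched in a few lines. The matching here is plain word overlap scored with cosine similarity, a deliberately crude stand-in for the embedding similarity a real semantic router would use. The tool names and their descriptions are hypothetical.

```python
import math
from collections import Counter

# Hypothetical tools, each described by the words a matching query is likely to contain.
TOOLS = {
    "pricing_api": "current price cost plan subscription fee",
    "docs_db": "feature list documentation capability integration setup",
}


def _vector(text: str) -> Counter:
    return Counter(text.lower().split())


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def select_tool(query: str):
    """Return the best-matching tool name, or None if nothing overlaps."""
    query_vector = _vector(query)
    scores = {name: _cosine(query_vector, _vector(desc)) for name, desc in TOOLS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None


print(select_tool("what is the current price of the starter plan"))
print(select_tool("list the integration feature set"))
```

Returning `None` when nothing matches is the important design choice: the agent should decline to answer rather than fall back on its training data.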

Training data is often stale. It’s yesterday’s news. By providing real-time tools, you ensure the content is current. This is critical for programmatic SEO in fast-moving industries like finance or tech. You wouldn’t trust a 2024 model to give you 2026 interest rates. You shouldn’t trust it to describe your latest product update either.

Connect your CMS to live data feeds. Let the AI query them. This creates a real-time content engine. It is accurate. It is fresh. It is useful. It solves the hallucination problem by removing the need for memory. The facts are right there. The machine just translates them.

What prompt engineering techniques stop logical leaps?

Vague prompts invite fantasy. If you ask for a strategic summary, you get fluff. If you ask for a summary using only properties A, B, and C, you get accuracy. Be specific. Use structured output formats like JSON or Markdown tables inside your prompt instructions. This forces the model to think in categories. It limits the room for creative writing.
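Asking for JSON also makes the output easy to check. As a minimal sketch, assuming the prompt allowed only three properties (the names below are placeholders for “A, B, and C”), the response can be rejected whenever the model adds a field it was not asked for.

```python
import json

# Hypothetical stand-ins for the properties the prompt explicitly allowed.
ALLOWED_PROPERTIES = {"name", "price", "platforms"}


def parse_structured_output(raw: str) -> dict:
    """Parse the model's JSON reply and reject any property outside the brief."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    unexpected = sorted(set(data) - ALLOWED_PROPERTIES)
    if unexpected:
        raise ValueError(f"model added properties not in the brief: {unexpected}")
    return data


good = parse_structured_output('{"name": "Acme CRM", "price": "$10"}')
print(good)

try:
    parse_structured_output('{"name": "Acme CRM", "awards": "Best CRM 2025"}')
except ValueError as error:
    print("rejected:", error)
```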

Try the Chain of Thought technique. Ask the model to explain its reasoning before it writes the final copy. This slows it down. You can catch a hallucination in the reasoning step. If the logic is flawed, the output will be too. You can even set up a critic prompt: one model writes, and another model checks for errors. This adversarial setup is powerful. It’s self-correcting.

And never forget to define the persona. A technical documentation specialist is less likely to hallucinate than a creative blogger. Set the tone. Set the boundaries. Watch the quality rise. It’s worth the extra effort.

Secure your brand narrative through rigorous data validation

You are building an asset. Treat it like one. Programmatic SEO is a tool for reach, but it shouldn’t be a tool for noise. By implementing grounding, RAG, and strict human oversight, you eliminate the risk of the machine going rogue. Start by auditing your current pages. Identify the hallucinations. Trace them back to the prompt or the data source. Fix the root cause. This isn’t a one-time task. It’s a continuous process of refinement.

As AI models evolve in 2026, they become more convincing. This makes their lies harder to spot. You must stay ahead of the curve. Build your Knowledge Graph today. Standardize your data entry. Use these hallucination recovery strategies to clean up your existing content. Your traffic depends on it. Your brand depends on it. Get to work.

Common questions about AI accuracy in SEO


Can I use AI to check if another AI is hallucinating?

Yes. This is called a critic-bot workflow. You provide the second AI with the source data and the generated text. It flags discrepancies. It’s a standard practice for high-volume programmatic SEO sites.

Will Google penalize me for AI hallucinations?

Google penalizes unhelpful or misleading content. If your hallucinations provide false information that harms a user’s decision-making process, your rankings will suffer. Accuracy underpins E-E-A-T (experience, expertise, authoritativeness, and trustworthiness), the framework in Google’s quality rater guidelines.

What is the best model to avoid hallucinations in 2026?

Models with large context windows and strong RAG capabilities are best. Look for models that specifically offer grounding features or integrated search tools. The model’s ability to cite its sources is a key indicator of reliability.

How often should I audit my programmatic content?

You should run automated checks on every update. Manual audits should happen monthly. Focus on pages that show a sudden drop in engagement or ranking. These are often the first signs of a data integrity issue.

-


GEO Strategies for SGE 2026: Modern Search Success Tactics

Trust matters more now than ever. You’ve likely noticed your organic traffic patterns shifting as AI snapshots dominate the top of every search result page. It’s frustrating to see your hard work summarized by a machine without a clear click to your site. You need a way to ensure your brand remains the primary source that these AI models rely on. Implementing effective generative engine optimization strategies for SGE 2026 is no longer a choice for forward-thinking marketers. It’s the only way to survive.

Why is GEO critical for your brand visibility in 2026?

The search landscape has fundamentally changed. Traditional blue links are now secondary to the AI-generated overviews that provide instant answers to complex user queries. AI engines don’t just find content. They synthesize it. This means your data must be structured in a way that machines can easily ingest, cite, and present to the user.

If you don’t adapt, your content stays buried. But if you align your content with how large language models function, you become the definitive authority. And authority is the currency of the modern web. Every SEO strategy must now account for how Perplexity, ChatGPT, and Google SGE interpret information. They look for consensus. They crave verified facts.

How can you optimize content for AI-generated snapshots?

Start by focusing on directness. AI models prioritize content that answers questions with zero fluff or filler. You should treat your paragraphs like data blocks that can be lifted and placed into a summary. This approach is central to generative engine optimization strategies for SGE 2026 because it reduces the friction for the AI crawler. Use clear, declarative sentences.

Structure your technical data. Use Schema markup to define every entity on your page, from product prices to expert author bios. But don’t stop at the code. Ensure your text uses clear headers that mirror the way people actually speak to their AI assistants. When a user asks a long-tail question, your paragraph should be the perfect answer.

Photo by ThisIsEngineering on Pexels

What role does factual density play in AI rankings?

Data shows that LLMs prefer highly dense information. They look for specific statistics, named entities, and unique insights that haven’t been regurgitated across a thousand other blogs. To win here, you must include original research. Cite your sources clearly. Use numbers.

Content with high factual density earns more citations. It’s not about word count. It’s about the amount of useful information per paragraph. If your article is thin, the generative engine will simply ignore it in favor of a competitor who provides more depth. You must become the most reliable data point in your niche.

How do you build brand authority that AI models trust?

Trust is built off-site. AI models are trained on massive datasets that include social media, news outlets, and academic journals. This means your GEO strategy must extend beyond your own domain. Get mentioned in reputable industry publications. Engage in high-level discussions on platforms where experts gather.

The more often your brand is mentioned alongside specific keywords, the more the AI associates you with that topic. It’s a digital reputation game. But it’s also about consistency. If your site says one thing and your social channels say another, the AI sees friction. Stay consistent. Be everywhere.

Can you use structured data to influence generative results?

Yes, and it’s your most powerful weapon. While traditional SEO used Schema for rich snippets, generative engine optimization strategies for SGE 2026 use it to build a knowledge graph. This tells the AI exactly how your products relate to specific problems. It maps your expertise.

Implement Organization and Person schema to link your content to real identities. AI engines are programmed to avoid hallucination, so they look for verified identities to back up claims. When you provide this data in a machine-readable format, you make it easy for the engine to trust you. It’s like giving the AI a map to your brain.
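For illustration, the sketch below generates JSON-LD that ties an article to a named author and publisher using schema.org’s `Article`, `Person`, and `Organization` types. The names, URLs, and helper functions are placeholders; the resulting JSON would normally be embedded in the page inside a `<script type="application/ld+json">` tag.

```python
import json


def person(name: str, url: str) -> dict:
    return {"@type": "Person", "name": name, "url": url}


def organization(name: str, url: str, same_as: list[str]) -> dict:
    # sameAs lists other profiles that refer to the same organization.
    return {"@type": "Organization", "name": name, "url": url, "sameAs": list(same_as)}


def article_jsonld(headline: str, author: dict, publisher: dict) -> str:
    """Serialize an Article that links its content to a real author and publisher."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "Article",
            "headline": headline,
            "author": author,
            "publisher": publisher,
        },
        indent=2,
    )


# Placeholder identities for the example.
markup = article_jsonld(
    "GEO Strategies for SGE 2026",
    person("Jane Doe", "https://example.com/authors/jane-doe"),
    organization("Example Co", "https://example.com", ["https://www.linkedin.com/company/example-co"]),
)
print(markup)
```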

Which content formats perform best in the current search era?

The winners are those who use hybrid formats. You need a mix of long-form analytical pieces and quick-hit summary blocks. Bulleted lists are perfect for Google SGE to pull into its multi-step instructions. Tables are excellent for comparison queries.

And don’t forget video transcripts. Search engines now index the text within your videos to answer spoken queries. Ensure your video descriptions are keyword-rich but natural. But most importantly, keep your mobile experience flawless. If the page loads slowly, the AI might crawl it, but the user will never see it.

How do search intent and conversational keywords change the game?

Users no longer type “running shoes” into a search bar. They say, “Find me the best carbon-plated running shoes for a marathon under two hundred dollars.” Your content must mirror this conversational tone perfectly. Use natural language. Avoid jargon.

Focus on the why and how. Generative engines are built to solve problems, not just provide links. If your content guides the user through a decision-making process, you’ll see higher engagement. This is the heart of SGE optimization. You’re helping the machine help the human.

Do you need to rethink your keyword research for 2026?

Old-school keyword volume is a dying metric. Instead, look for entity relationships and question-based clusters. You want to rank for the intent, not just the string of letters. Use tools that show you the follow-up questions users ask after their initial search.

Map out the entire journey. A user might start with a broad question and narrow it down through three or four AI prompts. You want to be the answer to every single one of those prompts. This creates a loop of visibility. It makes your brand synonymous with the solution.

Future Proof Your Visibility through Strategic GEO Implementation

The shift toward AI-led discovery is permanent and accelerating. You must stop writing for old algorithms and start writing for intelligent systems that value clarity, authority, and factual precision. By adopting generative engine optimization strategies for SGE 2026, you ensure that your brand isn’t just a footnote in a summary but the primary source driving the entire conversation.

Invest in your data structure today. Audit your existing content for factual density and conversational relevance. But most importantly, keep your focus on the human on the other side of the screen. If you provide genuine value, the AI will find you. Start optimizing now.

Frequently Asked Questions About SGE in 2026

What is the main difference between SEO and GEO?

SEO focuses on ranking websites in a list of results based on keywords and links. GEO focuses on making content extractable and authoritative so AI models include your brand in their generated summaries and answers.

How often should I update my content for generative engines?

AI models value freshness and accuracy. You should review your high-traffic pages at least once a quarter to ensure all statistics, links, and claims remain accurate and reflect current industry standards.

Does word length still matter for ranking in SGE?

Word count is secondary to information density. A 500-word article packed with unique data and direct answers will often outperform a 3000-word article filled with fluff and repetitive language.

Will traditional backlinks still help my GEO efforts?

Yes, but their role has evolved. Backlinks now serve as a signal of authority and trust to the AI. A link from a high-authority site tells the generative engine that your information is reliable and should be cited.

-

AI SEO Agents for Internal Linking: Boost Rankings in 2026

Ignoring your site architecture is a slow death for your rankings. Every orphan page on your domain acts as a leak where potential organic traffic simply drains away before it ever reaches your bottom line. You can’t fix this manually anymore. It’s too slow. It’s too prone to human error. Smart teams are now deploying AI SEO agents for automated internal linking to maintain a perfect web of connectivity that bots and users both love. They scale. They win.

Internal links are the central nervous system of your website content. They tell Google which pages matter most. If your linking strategy is broken, your highest-value conversion pages stay buried. Using AI SEO agents for automated internal linking ensures that every new article you publish is instantly woven into your existing authority. It’s about relevance. It’s about speed. And it’s how you dominate in 2026.

Why should you use AI agents to manage your site architecture?

Manual linking is tedious. You have to hunt for old posts to find anchor text. It takes hours. But AI SEO agents for automated internal linking handle this task in seconds. These agents scan your entire database to find semantic relationships you might miss. They see patterns. They suggest links based on real data.

Modern search engines prioritize depth and topical authority. If a page exists in a vacuum, it won’t rank for competitive terms. AI agents prevent this by creating a logical flow of PageRank across your domain. Don’t waste time. Let the machines build the map. But keep your eyes on the results.

You need a system that learns as your site grows. Large domains with thousands of URLs are impossible to manage by hand. An automated agent doesn’t get tired or skip a page. It provides a level of consistency that a human editor can’t match. Accuracy is everything. Efficiency is the prize.

How do AI SEO agents for automated internal linking identify topical clusters?

Context is the priority. AI agents use natural language processing to understand the core theme of every paragraph. Instead of just searching for exact keyword matches, they look for semantic relevance. This builds a stronger cluster. It keeps users on the page.
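As a rough illustration of semantic matching, the sketch below scores existing pages against a new draft with a plain bag-of-words cosine similarity. This is a deliberately simple stand-in for the language models a real agent would use, and the page texts and the `suggest_links` helper are hypothetical.

```python
import math
import re
from collections import Counter


def term_vector(text: str) -> Counter:
    """Lowercased word counts, ignoring words of three letters or fewer."""
    return Counter(w for w in re.findall(r"[a-z]+", text.lower()) if len(w) > 3)


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def suggest_links(new_text: str, pages: dict[str, str], top_n: int = 2) -> list[str]:
    """Return URLs of the existing pages most similar to the new text."""
    new_vec = term_vector(new_text)
    scored = [(cosine(new_vec, term_vector(body)), url) for url, body in pages.items()]
    scored.sort(reverse=True)
    return [url for score, url in scored[:top_n] if score > 0]


# Hypothetical page bodies, shortened for the example.
pages = {
    "/backlinks-guide": "backlinks build authority because other sites link to your content",
    "/crawl-budget": "crawl budget limits how many pages googlebot requests from your server",
    "/recipes": "slow roasted tomato soup with basil",
}
draft = "internal links and backlinks both pass authority between pages of content"
print(suggest_links(draft, pages))
```

The unrelated recipe page scores zero and is never suggested, which is the behavior you want from any relevance filter, however sophisticated the model behind it.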

The agent creates a topical map of your site. It identifies your pillar pages and ensures all supporting content points back to them. This creates a clear hierarchy. Google’s crawlers follow these paths. They index your content faster. And they understand your site’s purpose better.

Think about your user’s journey. When an agent places a link, it’s looking for the next logical step for the reader. If someone is reading about SEO, they probably want to know about backlinks. The agent finds that connection. It places the link naturally. You’ll see your bounce rates drop almost immediately.

Can automation truly improve your search engine rankings and crawl budget?

Crawl budget is finite. If Googlebot gets lost in a maze of dead ends, your new content stays hidden. AI SEO agents for automated internal linking optimize the path for these bots. They reduce the click depth. They highlight new content. It helps bots find your best work.
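Click depth is easy to measure once you have a map of which page links to which. The sketch below assumes a hypothetical `site` dictionary exported from a crawler and uses a breadth-first search from the homepage to find the minimum number of clicks to each page.

```python
from collections import deque


def click_depths(link_graph: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage; depth is the minimum clicks to reach a page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in link_graph.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link map: page -> pages it links to.
site = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/blog/post-2"],
    "/blog/post-2": [],
    "/pricing": [],
}
depths = click_depths(site)
deep_pages = [url for url, depth in depths.items() if depth >= 3]
print(deep_pages)
```

Pages that never appear in the result are unreachable from the homepage, which makes them candidates for new internal links.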

Links are signals of importance. A page with hundreds of internal links is seen as a priority. By automating this, you ensure your money pages get the most juice. The agent balances the distribution. It prevents any single page from hogging all the equity. This lift is visible.
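The "juice" described here is usually modelled with PageRank. The sketch below is a simplified power-iteration version that runs over internal links only. It is an illustration of the idea, not Google's actual computation, and the `site` graph and the 0.85 damping factor are assumptions.

```python
def pagerank(
    link_graph: dict[str, list[str]], damping: float = 0.85, iterations: int = 50
) -> dict[str, float]:
    """Simplified PageRank over internal links (power iteration)."""
    pages = list(link_graph)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page, targets in link_graph.items():
            targets = [t for t in targets if t in link_graph]
            if targets:
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Dangling page with no outgoing links: spread its rank evenly.
                for p in pages:
                    new_rank[p] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical site where every page links to the pricing page.
site = {
    "/": ["/pricing", "/blog"],
    "/blog": ["/pricing", "/blog/post-1"],
    "/blog/post-1": ["/pricing"],
    "/pricing": ["/"],
}
scores = pagerank(site)
for url, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{url:15} {score:.3f}")
```

In this toy graph the pricing page ends up with the highest score because every other page links to it, which is the effect you are aiming for with a money page.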

Rankings follow structure. A disorganized site confuses the algorithm. When you use AI SEO agents for automated internal linking, you provide the clarity the algorithm craves. Your keywords start to climb. Your traffic becomes more stable. It’s a compounding effect. More links lead to more views.

- Automated discovery: Finds every orphan page and links it to a relevant parent.

- Contextual anchor text: Swaps generic "click here" links for keyword-rich descriptions.

- Broken link repair: Automatically updates links when you change a URL structure.

What are the best tools for agentic internal link optimization in 2026?

The market has shifted. In 2026, we’ve moved past simple plugins to true agentic workflows. Tools like AIOSEO Link Assistant and Surfer AI are now standard for many teams. They offer real-time suggestions. They integrate directly with your CMS. It’s a smooth process.

Newer players like Similar.ai and Quattr have introduced autonomous linking agents. These tools don’t just suggest. They execute. You set the rules, and the agent modifies the HTML on the fly. It’s powerful. It requires trust. But the time savings are massive.

Look for tools that offer data-driven insights. You want an agent that looks at Search Console data to see which pages need more help. If a page is sitting on page two, the agent should prioritize it for new links. This isn’t just automation. It’s strategy. Choose wisely.

How do you keep internal links natural when using AI agents?

Over-optimization is a risk. If an agent stuffs too many links into one paragraph, it looks like spam. You must set threshold limits for your agents. Only permit two or three links per section. Keep it clean. Keep it readable.

The anchor text must vary. Using the same keyword for every link to a page is a red flag. Sophisticated AI SEO agents for automated internal linking use synonyms and long-tail phrases. They mimic human writing patterns. They avoid the footprint of a bot. Variety creates a natural profile.
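A simple audit can catch repetitive anchors before they become a footprint. The sketch below flags any target page where a single anchor text accounts for more than half of its internal links. The 50 percent threshold, the three-link minimum, and the sample data are assumptions to tune for your own site.

```python
from collections import Counter, defaultdict


def overused_anchors(
    links: list[tuple[str, str]], max_share: float = 0.5, min_links: int = 3
) -> dict[str, str]:
    """Map each target URL to its dominant anchor when that anchor exceeds max_share."""
    anchors_by_target: dict[str, Counter] = defaultdict(Counter)
    for target, anchor in links:
        anchors_by_target[target][anchor.strip().lower()] += 1

    flagged = {}
    for target, counts in anchors_by_target.items():
        total = sum(counts.values())
        anchor, uses = counts.most_common(1)[0]
        if total >= min_links and uses / total > max_share:
            flagged[target] = anchor
    return flagged


# Hypothetical (target URL, anchor text) pairs collected from a crawl.
links = [
    ("/crawl-budget", "crawl budget"),
    ("/crawl-budget", "crawl budget"),
    ("/crawl-budget", "Crawl Budget"),
    ("/crawl-budget", "how Googlebot spends its time"),
    ("/sitemaps", "XML sitemap guide"),
    ("/sitemaps", "keeping sitemaps clean"),
    ("/sitemaps", "sitemap best practices"),
]
print(overused_anchors(links))
```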

Always review the first few hundred links. Most agents allow for an approval queue. Look at the placements. Are they helpful? Do they feel forced? Once you’re confident in the agent’s logic, you can move to full autonomy. Start slow. Build trust. Then scale up.

Which metrics should you track to measure the success of AI linking?

Watch your click-through rate. If users are clicking the links the agent provides, the relevance is high. If they aren’t, the agent’s logic needs a tweak. Use heatmaps to see where users hover. Adjust your settings. Optimize the flow.

Check your average crawl frequency. Does Googlebot visit your deeper pages more often now? You can see this in your server logs. A successful setup of AI SEO agents for automated internal linking will show increased bot activity across the whole domain. It means the map is working. The bots are exploring.

Monitor your page authority scores. Tools like Ahrefs or Semrush can show you how internal juice is flowing. You want to see a rise in the authority of your target pages. If the numbers move up, your automation is paying off. Results don’t lie. Data drives the next move.

Three core metrics for link health

- Internal Link Density: The average number of links per 1,000 words.

- Link Equity Distribution: How evenly PageRank is spread across the site.

- Orphan Page Count: The number of pages with zero incoming internal links.
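Two of these metrics can be computed directly from a crawl export. The sketch below assumes a hypothetical `site` dictionary that maps each page to the pages it links to, and it treats the homepage as exempt from the orphan check.

```python
def orphan_pages(link_graph: dict[str, list[str]], home: str = "/") -> list[str]:
    """Return known pages with zero incoming internal links (self-links do not count)."""
    inbound = {url: 0 for url in link_graph}
    for source, targets in link_graph.items():
        for target in set(targets):
            if target != source and target in inbound:
                inbound[target] += 1
    return sorted(url for url, count in inbound.items() if count == 0 and url != home)


def link_density(link_count: int, word_count: int) -> float:
    """Internal links per 1,000 words."""
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    return link_count * 1000 / word_count


# Hypothetical internal link map: page -> pages it links to.
site = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/", "/pricing"],
    "/pricing": ["/"],
    "/old-landing-page": ["/pricing"],
}
print(orphan_pages(site))
print(link_density(link_count=8, word_count=2000))
```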

What are the biggest mistakes to avoid with automated link agents?

Don’t link for the sake of linking. If the connection isn’t valuable to the reader, don’t include it. AI SEO agents for automated internal linking can sometimes be too aggressive. They prioritize the algorithm over the person. This is a mistake. Always put the user first.

Avoid recursive link loops. This happens when Page A links to Page B, which links back to Page A, infinitely. A bad agent might create these clusters by accident. It wastes crawl budget. It confuses search engines. Set rules to prevent this. Audit your links regularly.

Never ignore your outbound link profile. While internal links are vital, you still need to point to external authority sources. Some agents focus so much on your own site that they ignore the wider web. This makes your content look insular. Balance is essential. Link out too.

Modernize your site structure with autonomous agents

The days of manual link spreadsheets are over. You can’t keep up with the volume of content required to rank today if you’re still clicking through every old post. Deploying AI SEO agents for automated internal linking is no longer an optional luxury. It’s a prerequisite for staying competitive in a saturated market.

Start by auditing your current structure. Identify your orphan pages. Select an agent-based tool that fits your current tech stack. Run a pilot program on one sub-folder of your site. Small wins lead to big changes. You’ll see the impact in your search visibility within weeks.

Take control of your data. Don’t let your content sit in silos. Link it. Boost it. Let the AI handle the heavy lifting while you focus on high-level strategy and creative production. Your rankings depend on it. Your future self will thank you. Get started now.

Frequently Asked Questions

How many internal links are too many for one page?

Most experts suggest keeping it between 3 and 5 links per 1,000 words of content. However, the limit is more about user experience than a hard number. If the links feel helpful and don’t clutter the reading experience, you’re safe. AI agents can be programmed to respect these specific density limits automatically.

Will AI-generated links get my site penalized by Google?

No, there’s no penalty for using AI to manage your internal structure as long as the links are contextually relevant. Google cares about the quality and utility of the link for the user. If your AI SEO agents for automated internal linking are creating a better experience for people, the search engine will reward you with better rankings.

Do I need technical skills to set up an SEO agent?

Most modern platforms are designed for marketers, not developers. Many tools offer a no-code interface where you simply connect your CMS and set your preferences. While a basic understanding of SEO strategy helps, you don’t need to write code to get an agent running on your site today.

-

Best AI Workflow for High-Volume Content: Maximize Output

Your brand’s ability to dominate search rankings depends on moving faster than the competition without sacrificing the human depth that readers actually crave. You’re likely drowning in half-baked drafts or staring at a blinking cursor while trying to scale a content machine that just won’t click into gear. Content debt is real. And it’s killing your growth. Modern teams now use the best AI workflow for high volume long-form content production to transform raw ideas into ranking assets in minutes instead of days. They see results. They stay lean. They win the attention war.

The secret lies in the handoff between machine speed and human strategy. AI doesn’t replace the expert, it simply removes the friction of the mundane. When you treat your LLM as a junior researcher rather than a magic button, everything changes. Your output triples. Your costs plummet. The quality stays high.

Why is a structured AI process necessary for scaling content?

Speed is just one part of the equation. You need the best AI workflow for high volume long-form content production to maintain a consistent brand voice across thousands of pages. Manual drafting relies on individual whims. Systems rely on logic. Logic is what scales.

Chaos creates waste. Without a clear path from keyword to published post, your team will waste hours on repetitive prompting and fixing hallucinated facts. The best AI workflow for high volume long-form content production builds guardrails that prevent your content from sounding like every other generic bot on the web. It ensures every piece serves a specific business goal. But you have to build that skeleton first.

Smart companies are moving toward multi-agent systems. Different AI agents handle specific tasks like SERP analysis, drafting, and fact-checking. This specialization mimics a real newsroom. It works. It’s efficient. It’s the future of enterprise publishing.

How do you select the right tech stack for large scale writing?

Tool fatigue is a productivity killer. You don’t need every new shiny app, you just need a stack that talks to itself through clean integrations. Look for platforms that offer bulk processing capabilities and API access. If you can’t automate the flow, you’ll hit a ceiling quickly. You need an engine, not a tricycle.

Start with a core content intelligence tool like Frase or Surfer SEO. These provide the data-driven blueprints your AI needs to follow to actually rank. Combine this with a powerful LLM like Claude 3.5 Sonnet or GPT-4o for the actual prose. Both offer distinct tonal advantages depending on your niche. Claude tends to sound more human. GPT-4o excels at logical formatting.

Connect everything with an automation bridge. Make.com or Zapier can move your generated text from the AI to a Google Doc and then into WordPress or Webflow. This eliminates the copying and pasting that drains your team’s energy. Frictionless movement is high volume long-form content production at its finest. It saves hours. It’s essential.

Recommended Content Tools for 2026

- Claude 3.5 Sonnet: Best for high-quality, nuanced prose that avoids common AI tropes.

- Make.com: Critical for connecting your research tools to your CMS for hands-off publishing.

- Jasper: Built specifically for marketing teams that need to maintain a strict brand voice at scale.

- Perplexity: Perfect for the research phase to pull real-time data and cited sources.

What does an efficient AI research phase look like?

Garbage in leads to garbage out. The best AI workflow for high volume long-form content production must begin with deep data extraction before a single sentence is written. If the AI doesn’t know what high-ranking competitors are saying, it can’t beat them. It just guesses. Guessing is for amateurs.

Use AI agents to scrape the top ten results for your target keyword. They should identify common headings, the average word count, and the specific questions users are asking in the People Also Ask section. This data becomes your context window. It’s the fuel for the engine. And it’s non-negotiable.

Refine your research into a comprehensive brief. This brief should include the target audience, the desired action, and a list of semantic keywords to include. When the AI has a narrow lane to stay in, it performs significantly better. It stays focused. It delivers value. It sticks to the point.

How can you automate the outlining process for depth?

Outlines are the secret to flow. A weak outline results in repetitive paragraphs and logical leaps that frustrate readers and search engines alike. You want to structure your content so that it answers the next question a reader will have before they even ask it. This creates stickiness. It keeps them reading.

Feed your research data into the AI and ask it to generate a topical map for the article. Tell it to look for gaps in existing content that you can fill with unique insights. Avoid generic H2 headings that everyone else uses. Be specific. Be daring. Be helpful.

Review the outline manually. This is the first of two critical human-in-the-loop moments. Ensure the logic holds up and the headers follow a natural progression of ideas. Once the skeleton is strong, the drafting phase becomes a simple execution task. It’s fast. It’s effective. It’s ready for the next step.

What is the best way to generate long form drafts without losing quality?

Generating 2,000 words in one go usually results in a mess. To maintain the best AI workflow for high volume long-form content production, you should generate your content section by section. This allows the AI to stay within its token limits and maintain a high level of detail. It prevents fluff. It preserves quality.
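Section-by-section drafting can be sketched as a loop that sends one prompt per outline heading. The `generate` callable below is a placeholder for whichever LLM client you use, and `fake_model` exists only so the example runs offline. Both names, the prompt wording, and the sample brief are assumptions.

```python
from typing import Callable


def draft_by_section(brief: str, outline: list[str], generate: Callable[[str], str]) -> str:
    """Draft one outline section per model call, passing the brief and prior headings each time."""
    sections = []
    for index, heading in enumerate(outline):
        covered = ", ".join(outline[:index]) or "nothing yet"
        prompt = (
            f"Brief: {brief}\n"
            f"Sections already written: {covered}\n"
            f"Write only the section titled: {heading}\n"
            "Use contractions and a direct tone."
        )
        sections.append(f"{heading}\n\n{generate(prompt).strip()}")
    return "\n\n".join(sections)


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM client so the sketch runs without network access."""
    heading = prompt.split("Write only the section titled: ")[1].splitlines()[0]
    return f"[draft text for '{heading}']"


article = draft_by_section(
    brief="Audience: SEO leads at large e-commerce sites.",
    outline=["What is crawl budget?", "How to find crawl waste"],
    generate=fake_model,
)
print(article)
```

Passing the list of sections already written into each prompt is one way to reduce repetition between sections, since each call otherwise has no memory of the others.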

Use a specific prompt for each section that references the overall brief. Give the AI style guidelines that forbid the use of banned words or repetitive sentence structures. Tell it to use contractions and a direct tone. This makes the draft feel less like a machine wrote it and more like an expert spoke it. It’s better for the reader. It’s better for the brand.

But don’t stop at the first draft. Run the text through a readability checker to ensure it hasn’t drifted into academic jargon. AI loves to sound smart at the expense of clarity. Your job is to pull it back to reality. Keep it punchy. Keep it simple. Keep it useful.

How do you implement a human review layer at scale?

Automation is not abdication. The best AI workflow for high volume long-form content production must include a stage where a human editor adds the Information Gain that AI cannot fake. Personal anecdotes, unique case studies, and contrarian opinions are what make content shareable. AI cannot experience things. You can.

Assign your editors to focus on the first 20 percent of every piece. The hook and the first few paragraphs determine if a reader stays or bounces. If those are perfect, the rest of the AI-generated content can do the heavy lifting. This allows one editor to manage five times the usual volume. They edit. They don’t write. They’re strategists.

Check for factual accuracy and link integrity. Even the best models in 2026 can occasionally hallucinate a statistic or cite a dead link. Use an automated fact-checking tool to flag potential issues, then have your human expert verify them. This protects your site’s authority. It builds trust. It stays safe.

Can you integrate AI images and internal links automatically?

A wall of text is a bounce risk. The best AI workflow for high volume long-form content production should include automated asset generation. Tools like Midjourney or DALL-E 3 can create custom featured images and section graphics based on your article headings. Consistent visuals improve the user experience. They look professional. They help branding.

Internal linking is the other half of the battle. Use a tool like LinkWhisper or a custom script to scan your new draft and suggest links to existing pages on your site. This distributes link equity and helps search engines crawl your content faster. It’s an SEO win. It’s a UX win.

Don’t forget the metadata. AI is excellent at generating catchy titles and meta descriptions that meet character counts. Set up your automation to push these directly into your CMS. This means when an editor hits publish, the page is already fully optimized. It’s ready. It’s live. It ranks.

Take Control of Your Content Volume Today

Efficiency doesn’t happen by accident. You must intentionally build the best AI workflow for high volume long-form content production, one that combines the processing power of modern models with the strategic oversight of your best people. Start small by automating your research and outlining. Watch the time savings accumulate. Then scale the drafting.

The brands that win in 2026 aren’t the ones with the largest writing teams. They’re the ones with the smartest systems. By removing the manual labor of content creation, you free your team to focus on the big ideas that actually move the needle for your business. Stop writing. Start building. Your audience is waiting for something worth reading.

Frequently Asked Questions


Will AI-generated content hurt my SEO rankings?

No. Search engines like Google rank content based on its helpfulness and its Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T), not its source. As long as you provide useful information that answers the searcher’s intent, AI-assisted content can rank at the top. The key is manual oversight and adding unique value.

How many articles can one person produce with this workflow?

With the best AI workflow for high volume long-form content production fully automated, a single editor can realistically manage 15 to 25 high-quality long-form articles per week. This involves minimal drafting and focuses almost entirely on strategy, fact-checking, and final polishing.

Which AI model is best for long-form content in 2026?

Claude 3.5 Sonnet is currently the industry favorite for long-form prose because it avoids many of the repetitive linguistic patterns found in other models. However, GPT-4o remains superior for data-heavy articles and complex technical structuring.

Do I need coding skills to set up a content workflow?

Not necessarily. Visual automation platforms like Make.com allow you to connect different tools using a drag-and-drop interface. While some knowledge of APIs can help you customize the flow, most modern teams use no-code solutions to manage their production houses.

-

Fix Hidden Crawl Budget Waste for Large Sites: Expert Tips

Ignoring your crawl efficiency is like pouring expensive champagne into a glass with a hole in the bottom. You can keep buying the best bottles. Most of the value still ends up on the floor. It’s a tragedy of wasted resources. Learning how to fix hidden crawl budget waste for large sites is the only way to ensure Googlebot actually sees your most profitable pages. If your site has over 100,000 URLs, you simply can’t afford to let bots wander aimlessly through digital dead ends. You need a map. And you need a gatekeeper.

Search engines have limits. They won’t spend forever trying to parse your messy site architecture. When a bot hits a wall of duplicate content or infinite redirect loops, it leaves. It’s that simple. But you can regain control. By identifying where the leaks are, you turn a chaotic crawl into a streamlined indexing machine. Let’s get to work.

Why is Googlebot ignoring your most important pages?

Your crawl budget is finite. It’s the maximum number of pages search engines will crawl in a given timeframe. When your site is massive, bots often get stuck in low-value URL clusters before hitting your fresh content. It’s frustrating. You publish a phenomenal guide or a new product line, yet it sits unindexed for weeks.

This happens because your host load capacity is reaching its limit. Google protects your server from crashing by slowing down its crawl rate. But if it spends that limited time on junk, your ranking potential dies. And your revenue follows. You must prioritize. Every request should count.

Start by checking your Crawl Stats report in Google Search Console. Look for high percentages of Not Found (404) or Moved Permanently (301) status codes. These are red flags. They prove you’re paying a tax on errors. Clean them up now.

How can you identify crawl waste in faceted navigation?

Faceted navigation is a nightmare. It creates millions of unique URLs based on every possible filter combination you offer. A user might select blue, size medium, and under fifty dollars. That’s one URL. Switch the order, and it’s a new one. To a bot, this looks like infinite work.

You need to block these combinations. Use your robots.txt file to prevent bots from crawling unnecessary filter paths. But be careful. If you block URLs after they’re already indexed, Google can no longer crawl them to see a noindex or canonical tag, so you might create a zombie page problem. On the filter URLs you leave crawlable, use canonical tags as a secondary layer of protection.
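Before deploying a robots.txt change, it helps to test the rules against sample URLs. The sketch below uses Python's standard `urllib.robotparser` with a hypothetical rule set in which all filter URLs live under `/shop/filter/`. Note that this parser does plain prefix matching and does not implement the `*` and `$` wildcard patterns that Google supports, so treat it as a sanity check for prefix rules only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: filter combinations live under /shop/filter/, categories stay crawlable.
ROBOTS_TXT = """\
User-agent: *
Disallow: /shop/filter/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url: str, user_agent: str = "Googlebot") -> bool:
    """Check a URL against the parsed robots.txt rules."""
    return parser.can_fetch(user_agent, url)


for url in [
    "https://example.com/shop/shoes/",
    "https://example.com/shop/filter/color-blue/size-m/",
]:
    print(url, "->", "crawlable" if is_crawlable(url) else "blocked")
```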

And consider using AJAX for filters. This keeps the user experience fast without generating new URLs for every click. It’s cleaner. It’s smarter. Googlebot will thank you by spending its time elsewhere. Focus on the core categories.

Are redirect chains killing your site speed and crawl efficiency?

Redirects are sneaky. A single jump from page A to page B is fine. But when page A goes to B, then B goes to C, and C finally lands on D, you’ve created a chain. Each hop costs time. It drains the bot’s energy. Eventually, it gives up.

These chains often accumulate during site migrations or platform updates. They’re hidden crawl budget waste that most SEOs miss. You should audit your internal links to ensure they point directly to the final destination. Don’t make the bot work twice. Keep it simple.

Use tools like Screaming Frog to find these sequences. Map out the start and end points clearly. Then, update your database to bypass the middle steps. It’s a manual process. But the performance gains are massive. Your indexation rate will climb.
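Once you have exported the redirects, flattening the chains is mechanical. The sketch below assumes a hypothetical `redirects` mapping of source path to target path. It follows each chain to its end, counts the hops, and raises an error if it finds a loop.

```python
def resolve_redirect(url: str, redirects: dict[str, str], max_hops: int = 10) -> tuple[str, int]:
    """Follow a redirect map to its final URL and return (final_url, hops)."""
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or overly long chain at {url}")
        seen.add(url)
    return url, hops


def flatten_chains(redirects: dict[str, str]) -> dict[str, str]:
    """Point every redirect source straight at its final destination."""
    return {source: resolve_redirect(source, redirects)[0] for source in redirects}


# Hypothetical redirect export: source -> target.
redirects = {"/a": "/b", "/b": "/c", "/c": "/d", "/old-pricing": "/pricing"}
print(resolve_redirect("/a", redirects))
print(flatten_chains(redirects))
```

The flattened map is what you would load back into your redirect rules, and the same final destinations are what your internal links should point to.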

Which URL parameters are draining your resources?

Tracking parameters are useful for marketing. They’re terrible for technical SEO. If every newsletter link adds a unique string to the URL, Google sees thousands of versions of the same page. This is a classic case of duplicate content. It’s also a major drain.

The URL Parameters tool in Search Console used to let you tell Google which parameters were active and which were just for tracking, but Google retired it in 2022. You can’t rely on it anymore. Instead, use canonical tags that point every parameterized variant at the clean URL to signal the preferred version of every page. This helps consolidate link equity.

And try to use fragments instead of parameters for non-essential data. Search engines generally ignore everything after the hash symbol. This keeps your clean URLs in the index. It protects your crawl budget. Your site becomes leaner.
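A small helper can compute the clean URL that a canonical tag should point to. The sketch below uses only the standard library. The list of tracking parameters is an assumption and should match whatever your campaigns actually append.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters; adjust to match your own campaigns.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"gclid", "fbclid"}


def canonical_url(url: str) -> str:
    """Drop tracking parameters and the fragment, keeping functional parameters in order."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in TRACKING_KEYS and not key.startswith(TRACKING_PREFIXES)
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


print(canonical_url("https://example.com/guide?utm_source=newsletter&page=2&gclid=abc#intro"))
```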

Do you have a plan for managing internal 404 errors?

A 404 error is a dead end. When a bot hits one, it stops. If your site has thousands of broken links, you’re effectively telling Google to go away. Cleaning them up is one of the most common ways to fix hidden crawl budget waste for large sites. You must heal the wounds.

Fixing these improves the user journey. It also preserves the link juice flowing through your site. If the page is gone forever, use a 410 status code. This tells the bot the page is gone and won’t return. It’s more definitive than a 404.

For pages with existing traffic, redirect them to the most relevant equivalent. Don’t just dump everything onto the homepage. That’s a soft 404. Google hates those. They’re confusing. They’re wasteful. Be precise with your mapping.

Is your XML sitemap working for or against you?

Your sitemap is a priority list. If it’s full of junk, you’re giving the bot a bad itinerary. High-quality sitemaps should only include indexable URLs with 200 OK status codes. Remove redirects, 404s, and non-canonical pages immediately.
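The filtering rule above is straightforward to automate. The sketch below assumes a hypothetical crawl export where each page has a `url`, a `status`, and optional `noindex` and `canonical` fields, and it writes only the clean URLs into a sitemap using the standard sitemaps.org namespace.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[dict]) -> str:
    """Keep only indexable, canonical, 200-status URLs and serialize them as a sitemap."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        is_clean = (
            page["status"] == 200
            and not page.get("noindex", False)
            and page.get("canonical", page["url"]) == page["url"]
        )
        if is_clean:
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = page["url"]
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical crawl export.
pages = [
    {"url": "https://example.com/", "status": 200},
    {"url": "https://example.com/old", "status": 301},
    {"url": "https://example.com/gone", "status": 404},
    {"url": "https://example.com/shoes?sort=price", "status": 200,
     "canonical": "https://example.com/shoes"},
    {"url": "https://example.com/thanks", "status": 200, "noindex": True},
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```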

Large sites often need multiple sitemaps. Break them down by category or date. This makes it easier to spot which sections are struggling with indexation. If a sitemap for your blog has 5,000 URLs but only 200 are indexed, you have a problem. You can find the fire faster.

And keep them updated. A stale sitemap is a useless sitemap. Automate the process so new content appears instantly. But ensure your removal logic is just as fast. It keeps the bot focused. It keeps your site relevant.

How does server response time affect your crawl limit?

Fast sites get crawled more. It’s a simple relationship. If your server takes two seconds to respond, Googlebot will limit its visits. It doesn’t want to slow down your site for real users. Speed is a prerequisite for crawl budget optimization.

Check your Time to First Byte (TTFB). If it’s high, your hosting or your code is the bottleneck. Use caching layers to serve content faster. Compress your images and minify your scripts. Every millisecond you save is a potential new page crawled.

And monitor your logs. Log file analysis shows exactly what the bots are doing. It’s the truth. You’ll see if they’re hitting the same script over and over. You’ll see if they’re getting stuck on heavy assets. Fix the speed, and the volume will follow.
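A first pass at log file analysis needs nothing more than a regular expression and a counter. The sketch below assumes access logs in the common "combined" format and uses made-up sample lines. It matches on the user agent string only, which can be spoofed, so a production audit would also verify that the requests really come from Google.

```python
import re
from collections import Counter

# Captures the request path, status code, and user agent from a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_summary(lines: list[str]) -> tuple[Counter, Counter]:
    """Count status codes and requested paths for lines whose user agent mentions Googlebot."""
    statuses, paths = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match["agent"]:
            statuses[match["status"]] += 1
            paths[match["path"]] += 1
    return statuses, paths


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
# Made-up sample lines for illustration.
sample_log = [
    f'66.249.66.1 - - [10/Jan/2026:10:00:00 +0000] "GET /shop/filter/color-blue HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:05 +0000] "GET /shop/filter/color-blue HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:09 +0000] "GET /discontinued-product HTTP/1.1" 404 312 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [10/Jan/2026:10:00:11 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
statuses, paths = googlebot_summary(sample_log)
print(statuses)
print(paths.most_common(3))
```

A path that dominates the `most_common` list without being one of your priority pages is a strong hint about where the crawl budget is going.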

Audit your site for crawl efficiency today

Stop letting vanity metrics distract you from technical health. Large sites live and die by their indexation efficiency. If you don’t manage your crawl budget, the search engines will manage it for you. And you won’t like their choices. They’ll cut corners. They’ll miss your latest updates.

Take these steps to reclaim your visibility. Start with a deep audit. Identify the loops, the chains, and the dead ends. Clean your sitemaps. Block the filters. Once you remove the friction, Googlebot will glide through your site. You’ll see faster indexing. You’ll see better rankings. And you’ll finally stop wasting your most precious digital resource.

Frequently Asked Questions

- How often should I audit my crawl budget? You should perform a deep dive at least once a quarter. However, check your Google Search Console Crawl Stats weekly for sudden spikes or errors.

- Does every site need to worry about crawl budget? No. If your site has fewer than 10,000 pages, Google will likely crawl everything worth seeing. This is a strategy specifically for enterprise SEO and large e-commerce platforms.

- Can I use Noindex to save crawl budget? Not effectively. A noindex tag still requires the bot to crawl the page to see the tag. To save budget, you must block the crawl entirely via robots.txt.

- Will improving my site speed really increase my crawl rate? Yes. Google has confirmed that faster server response times allow their bots to request more URLs without taxing your system. It’s a direct correlation.

-

GEO Impact on Search Rankings: Strategies for 2026

Your website is a lonely island without a map if AI engines can’t find it. Generative Engine Optimization is the compass that guides these digital explorers straight to your content. It’s not enough to be seen. You must be cited as the definitive source. The impact of generative engine optimization on search rankings determines whether you’re a footnote or the main attraction.

How does GEO change the way search results are calculated?

Algorithms used to count blue links. Now they synthesize massive datasets to build custom answers for every user. Traditional search engines measured authority largely through backlinks. AI engines prioritize retrieval probability and the likelihood that your content answers a specific prompt. It’s a fundamental shift. You aren’t just ranking for keywords. You’re competing to be the most relevant data point in a generated summary.

Recent data indicates that visibility in AI search environments like Perplexity or Gemini happens much faster than traditional Google rankings. While SEO takes months, optimizations for AI can show results in weeks. This is a massive opportunity for new brands. They can bypass older, entrenched competitors by providing clearer, more structured data. Speed matters here. If your content is easy for a machine to parse, it gets synthesized first.

Focus on high-density information. Cut the fluff. Use clear headers and concise definitions. The impact of generative engine optimization on search rankings becomes visible when your site is cited as a source in an AI-generated paragraph. This drives highly qualified traffic to your pages. These users arrive ready to act.

Why are traditional SEO tactics failing in the AI search era?

Keywords are losing their crown. AI systems understand intent and semantic context far better than the search engines of five years ago. Rigid keyword stuffing makes your site look like junk to a sophisticated large language model. It ignores the nuance. It misses the point. You must write for the logic of the machine and the heart of the human.

Content that is too broad often gets ignored. AI engines look for authoritative depth and niche expertise that they can reliably summarize. If your page is a mile wide and an inch deep, it won’t be picked up. Google’s AI Overviews (formerly Search Generative Experience) prefer specific citations. They want data. They want facts. They want evidence.

- Remove passive voice to make your claims clearer for AI parsers.

- Include specific statistics that are easy for models to extract.

- Use schema markup to provide a technical roadmap for crawlers.
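For the schema markup step, a minimal sketch might generate a schema.org Article object as JSON-LD. The property names come from the schema.org vocabulary, while the author, date, and URL values below are hypothetical placeholders.

```python
import json


def article_jsonld(headline: str, author: str, date_published: str, url: str) -> str:
    """Build a schema.org Article object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    return json.dumps(data, indent=2)


snippet = article_jsonld(
    headline="GEO Impact on Search Rankings: Strategies for 2026",
    author="Jane Doe",  # hypothetical author
    date_published="2026-01-15",  # hypothetical publication date
    url="https://example.com/geo-impact",  # hypothetical URL
)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

The printed script tag is what you would place in the page's HTML, after validating it with a structured data testing tool.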

What is the measurable impact of generative engine optimization on search rankings?

Numbers don’t lie. Sites that adopt GEO strategies see a marked increase in organic visibility specifically within synthesized search summaries. This doesn’t always mean your position in a list of links goes up. It means your brand appears in the box at the very top of the page. That box is where the attention lives now. It’s the new page one.

Research from early 2026 shows that the impact of generative engine optimization on search rankings correlates with a 40 percent increase in brand mentions. When an AI cites you, users trust you more. They see you as the expert selected by the machine. It is a powerful form of social proof. Your site becomes the primary source.

The impact of generative engine optimization on search rankings is also seen in the quality of your traffic. Users who click through from an AI summary have already been vetted. They know what they want. They know you have it. Conversion rates for these visitors are significantly higher than standard search traffic.

How do you structure content to satisfy AI search agents?

Formatting is your secret weapon. AI models love lists, tables, and clear hierarchies because they are easy to ingest. If a model can’t figure out your main point in two seconds, it moves on. It’s ruthless. It’s efficient. It’s hungry for structure.

Use the inverted pyramid style of writing. Put the most important information in the first sentence of every paragraph. Follow it with supporting details. Keep it tight. This structure allows AI to clip your text directly into its responses. You become the direct answer. This is the goal.

- Define your primary topic clearly in the first 100 words.

- Use bulleted lists for all process-oriented content.

- Cite reputable sources to build a network of trust.

Can citations and brand mentions outweigh traditional backlinks?

Links still matter, but citations are the new currency. Generative Engine Optimization relies on being mentioned in a relevant context across the web. If AI sees your brand mentioned on Reddit, LinkedIn, and news sites, it recognizes you as an authority. It doesn’t always need a hyperlinked URL to connect the dots. It understands your identity.

This is where digital PR and GEO intersect perfectly. You need to be talked about in the places where your audience hangs out. AI scrapers are everywhere. They see the conversations. They catalog the sentiment. They remember who the experts are.

Maximize your footprint. Don’t just post on your own blog. Share insights on platforms that AI engines prioritize for real-time data. This includes social networks and technical forums. The more places you exist, the more likely you are to be synthesized. Your presence is your power.

What role does semantic density play in modern search rankings?

Stop worrying about keyword frequency. Start focusing on conceptual coverage. Semantic density means you are covering all the related topics and questions a user might have. If you’re writing about solar panels, you must also talk about inverters, battery storage, and tax credits. The AI looks for the whole picture. It wants the full story.
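Conceptual coverage can be roughed out with a few lines of Python. This is a sketch under stated assumptions: the concept list is something you compile by hand, matching is plain case-insensitive substring search, and the function name is hypothetical. It is not how any search engine measures coverage.

```python
def concept_coverage(text: str, concepts: list[str]) -> tuple[float, list[str]]:
    """Return the share of concepts mentioned in the text and the ones still missing."""
    lowered = text.lower()
    missing = [c for c in concepts if c.lower() not in lowered]
    covered = len(concepts) - len(missing)
    share = covered / len(concepts) if concepts else 0.0
    return share, missing

# Hypothetical draft about solar panels, checked against the related topics named above.
draft = "Solar panels pair well with battery storage, and many buyers ask about tax credits."
share, missing = concept_coverage(draft, ["inverters", "battery storage", "tax credits"])
print(f"{share:.0%} covered; missing: {missing}")
```

Substring matching is crude: it will miss singular or plural variants and synonyms. It is still enough to show which related topics a draft never mentions.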

When you provide a comprehensive answer, the impact of generative engine optimization on search rankings is undeniable. You become the go-to resource for that entire cluster of topics. This creates a halo effect. One strong page can lift the rankings of your entire domain. It’s a chain reaction.

Analyze your competitors’ AI citations. See what specific facts the engines are pulling from their sites. Then, provide better facts. Provide more recent data. Be the source that is too good to ignore. This is how you win.

How should businesses adapt their strategy for 2026 and beyond?

Stop chasing the algorithm of yesterday. The impact of generative engine optimization on search rankings is only going to grow as AI becomes the primary interface for the internet. If you’re not optimizing for the machine’s ability to understand you, you’re invisible. You’re gone. You’re forgotten.

Invest in original research. AI loves data that it hasn’t seen a million times before. If you publish a unique study, you become the primary source for every AI engine. They have to cite you. There is no other option. This is the ultimate leverage.

Audit your content for clarity. Get rid of the jargon that confuses both humans and machines. Use plain language to explain complex ideas. If a middle-schooler can understand it, an AI can summarize it. Clarity is the ultimate SEO hack. Keep it simple.

Future-Proof Your Digital Presence Today

The window of opportunity is wide open, but it won’t stay that way forever. Generative Engine Optimization is shifting from a competitive advantage to a basic requirement for survival. You must act now. You must adapt. You must evolve.

Start by identifying your most important pages. Update them with structured data and punchy, fact-heavy sentences. Monitor how AI engines respond to these changes. Adjust your tactics based on what gets cited. It is an iterative process. It requires constant attention.
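One common form of structured data is schema.org JSON-LD. The sketch below builds `FAQPage` markup from question and answer pairs using only Python's standard library. The helper name is hypothetical, and whether a given AI engine reads this markup is an assumption you would need to verify through the monitoring step described above.

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize question/answer pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

# Example pair; the output would be embedded in a <script type="application/ld+json"> tag.
markup = faq_jsonld([
    ("Will GEO replace traditional SEO?", "No, they work together."),
])
print(markup)
```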

The impact of generative engine optimization on search rankings is the difference between a thriving business and a ghost town. Don’t let your content get buried in the archives of the old web. Build for the future of search. Build for the engines of tomorrow. Your success depends on it.

Common Questions Regarding AI Search Performance

- What is the difference between SEO and GEO?

SEO focuses on ranking high in traditional search engine results pages through links and keywords. GEO focuses on making content easy for AI engines to retrieve, summarize, and cite in generated answers.

- Will GEO replace traditional SEO?

No, they work together. A strong SEO foundation provides the authority and technical health needed for GEO tactics to be effective in AI-driven environments.

- How can I track my performance in AI search?

Use tools that monitor brand mentions in AI summaries and track referral traffic from platforms like Perplexity, ChatGPT, and Google Gemini.

- Does content length matter for AI optimization?

Length is less important than information density. AI prefers content that provides a direct, accurate answer regardless of whether it is 500 or 2000 words long.
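Tracking referral traffic from AI platforms can start with your own logs. The Python sketch below counts visits by AI source from a list of referrer URLs. The hostname table is an assumption for illustration, not an official list, so check it against the referrers that actually show up in your analytics.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Assumed hostnames for illustration; verify against your own analytics data.
AI_REFERRERS = {
    "perplexity.ai": "Perplexity",
    "chatgpt.com": "ChatGPT",
    "gemini.google.com": "Google Gemini",
}

def ai_source(referrer: str) -> Optional[str]:
    """Map a referrer URL to an AI platform label, or None if it is not one."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, label in AI_REFERRERS.items():
        # Match the domain itself or any subdomain such as www.
        if host == domain or host.endswith("." + domain):
            return label
    return None

def count_ai_referrals(referrers: list[str]) -> Counter:
    """Count visits per AI platform, ignoring all other referrers."""
    return Counter(label for r in referrers if (label := ai_source(r)))

hits = count_ai_referrals([
    "https://www.perplexity.ai/search?q=example",
    "https://chatgpt.com/",
    "https://www.google.com/search?q=example",
    "https://chatgpt.com/c/abc",
    "",
])
print(hits)
```

This only covers visits that carry a referrer. Mentions inside AI summaries that never produce a click need a separate monitoring tool.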

-

Your inbox is overflowing with leads you can’t possibly call in time. Three years ago, agents spent hours manually scrubbing data and drafting property descriptions that nobody read. Today, top performers use AI for real estate to automate their entire workflow and close deals while they sleep. This technology isn’t a futuristic dream anymore. It’s the standard for anyone who wants to survive in a market where speed is the only currency that matters. You’re either using these tools to scale, or you’re watching your competitors take your market share. The gap is widening fast.

The Shift Toward Intelligent Property Analysis

Valuation used to be a guessing game based on outdated comps. You’d look at a house down the street, add a few thousand for a renovated kitchen, and hope for the best. Now, artificial intelligence in real estate has changed the math entirely. Machine learning models analyze thousands of data points in seconds. They look at local crime rates, school district shifts, and even the proximity to the newest coffee shops. This level of detail gives you a massive advantage during listing presentations. You aren’t just giving an opinion. You’re providing a data-backed forecast that builds instant trust with your clients.

Investors are seeing the biggest gains here. Predictive analytics can pinpoint which neighborhoods are about to gentrify before the first renovation permit is even filed. By processing historical price trends and current economic indicators, AI identifies “buy” signals that human eyes would miss. You can’t manually track every zoning change or infrastructure project in a city. But a well-trained algorithm can. It filters the noise and hands you the signal. This means less risk for your portfolio and higher returns for your investors.

Hyperlocal Valuation Models

Standard appraisal methods are too slow for March 2026. Buyers want answers now. AI-driven valuation models provide real-time updates based on live market fluctuations. If a major employer announces a new headquarters, the software adjusts property values across the zip code instantly. This allows you to price homes with surgical precision. You won’t leave money on the table. And you won’t let a listing sit for months because it was overpriced. Accuracy is the new competitive edge.

These models also account for “soft” data. They scan social media sentiment about specific neighborhoods. They track foot traffic patterns using anonymized mobile data. This creates a 360-degree view of a property’s true worth. It’s not just about square footage anymore. It’s about the lifestyle value of the location. You can explain this to your sellers with colorful charts and hard evidence. They’ll appreciate the transparency. You’ll appreciate the faster commission check.

Automating Lead Generation and Nurturing

Lead follow-up is where most agents fail. It’s hard to stay consistent when you’re out showing houses all day. AI for real estate agents solves this by acting as a 24/7 digital assistant. Conversational AI bots can qualify leads on your website at 3:00 AM. They ask about budget, timeline, and preferred locations. By the time you wake up, your calendar is full of appointments with people who are actually ready to buy. You stop wasting time on “lookers” and focus on “closers.”

Nurturing is just as important as the initial contact. Most leads take months to convert. AI tools track user behavior on your site to send perfectly timed emails. If a lead looks at three condos in a specific building, the system sends them a market report for that exact complex. It feels personal. It feels like you’re paying attention. But you didn’t have to lift a finger. This level of automation keeps you top-of-mind without the burnout of manual outreach.
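The condo example boils down to a simple trigger rule. Here is a minimal Python sketch, assuming your site logs one building name per listing view; the function name, the building names, and the default threshold of three are illustrative, not taken from any particular CRM.

```python
from collections import Counter

def buildings_to_report(viewed_buildings: list[str], threshold: int = 3) -> list[str]:
    """Return buildings a lead has viewed at least `threshold` listings in."""
    counts = Counter(viewed_buildings)
    return sorted(b for b, n in counts.items() if n >= threshold)

# Hypothetical view log for one lead: one entry per listing viewed.
views = ["Harbor Tower", "Elm Lofts", "Harbor Tower", "Harbor Tower"]
print(buildings_to_report(views))
```

Each building returned would queue a market report email for that complex. Real tools layer timing and frequency caps on top of a rule like this.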

Predictive Lead Scoring

Not all leads are created equal. Some are just browsing while they eat lunch. Others have a pre-approval letter and a moving truck scheduled. AI analyzes lead behavior to assign a “probability to close” score. High-scoring leads get pushed to the top of your CRM. You know exactly who to call first every morning. This increases your conversion rate because you’re talking to the right people at the right time. Efficiency is the key to scaling your business without adding more staff.
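To make the "probability to close" idea concrete, here is a small Python sketch that scores leads with a logistic function and sorts the call list. The fields, weights, and bias are hand-picked assumptions for illustration. A production system would learn them from past closings in your CRM.

```python
import math
from dataclasses import dataclass

@dataclass
class Lead:
    name: str
    preapproved: bool
    listings_viewed_7d: int
    requested_showing: bool

# Hand-picked illustrative weights; a real system would fit these to historical data.
WEIGHTS = {"preapproved": 2.0, "listings_viewed_7d": 0.15, "requested_showing": 1.5}
BIAS = -3.0

def close_probability(lead: Lead) -> float:
    """Logistic score between 0 and 1 built from a few behavior signals."""
    z = (
        BIAS
        + WEIGHTS["preapproved"] * lead.preapproved
        + WEIGHTS["listings_viewed_7d"] * min(lead.listings_viewed_7d, 20)  # cap heavy browsers
        + WEIGHTS["requested_showing"] * lead.requested_showing
    )
    return 1.0 / (1.0 + math.exp(-z))

def call_order(leads: list[Lead]) -> list[Lead]:
    """Highest-scoring leads first."""
    return sorted(leads, key=close_probability, reverse=True)

browser = Lead("Browser", preapproved=False, listings_viewed_7d=2, requested_showing=False)
ready = Lead("Ready", preapproved=True, listings_viewed_7d=10, requested_showing=True)
for lead in call_order([browser, ready]):
    print(lead.name, round(close_probability(lead), 2))
```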

The system also identifies “likely sellers” in your existing database. It looks for life events like marriages, divorces, or job changes through public records. When the algorithm flags a contact, it’s time to reach out. You can offer a free home equity report before they even think about calling another agent. This proactive approach wins listings. It turns your old database into a gold mine. You’ve already paid for these leads, so you might as well extract every bit of value from them.

Revolutionizing the Property Search Experience

House hunting used to be a chore. Buyers spent hours scrolling through endless lists of homes that didn’t fit their needs. AI for real estate has turned this into a curated experience. Modern search engines use “computer vision” to understand what’s in a photo. If a buyer likes mid-century modern kitchens, the AI finds every home with that specific aesthetic. It doesn’t rely on tags or descriptions. It sees what the buyer sees. This creates a “Netflix-style” recommendation engine for homes.

This tech also helps with “virtual staging” on the fly. A buyer can look at a vacant room through their phone and see it fully furnished in their preferred style. They can swap out the flooring or change the paint colors instantly. This helps them visualize the potential of a space. It removes the “imagination gap” that often kills deals. When a buyer can see themselves living in a house, they’re much more likely to make an offer. You’re selling a dream, not just four walls and a roof.

AI-Powered Virtual Tours

Static photos are no longer enough to grab attention. High-end buyers expect immersive experiences. AI can now generate 3D walkthroughs from a few 2D smartphone photos. You don’t need expensive camera gear or professional photographers for every listing. The software stitches the images together and creates a navigable space. It’s fast. It’s cheap. And it’s incredibly effective for out-of-state buyers who can’t visit in person.

These tours can even include “AI tour guides.” An interactive avatar can walk the buyer through the home and answer questions. It can cite the age of the roof or the brand of the appliances. This provides a consistent sales pitch every single time. It’s like having your best showing assistant available at all hours. Buyers feel empowered to explore at their own pace. You get more qualified second showings because the “looky-loos” have already filtered themselves out.

Streamlining Operations and Risk Management

The back-office work of real estate is a mountain of paperwork. Contracts, disclosures, and inspection reports take up way too much time. AI for real estate tools can now read and summarize these documents in seconds. They flag missing signatures or inconsistent dates before they cause a delay. This reduces the risk of legal trouble and keeps your transactions on track. You can spend more time negotiating and less time squinting at fine print.
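Once a document's fields have been extracted, the flagging step itself is straightforward. The Python sketch below assumes a hypothetical dictionary of already-extracted fields. The field names are invented for illustration, and the extraction is the hard part that AI tools handle.

```python
from datetime import date

def contract_issues(doc: dict) -> list[str]:
    """Flag unsigned parties and a closing date that precedes the inspection deadline."""
    issues = []
    for party, signed in doc["signatures"].items():
        if not signed:
            issues.append(f"missing signature: {party}")
    if doc["closing_date"] < doc["inspection_deadline"]:
        issues.append("closing date falls before inspection deadline")
    return issues

# Hypothetical extracted fields for one contract.
contract = {
    "signatures": {"buyer": True, "seller": False},
    "inspection_deadline": date(2026, 3, 20),
    "closing_date": date(2026, 3, 15),
}
print(contract_issues(contract))
```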

Risk management is another huge benefit. AI can scan titles and public records for “red flags” that might complicate a sale. It looks for liens, easements, or historical disputes that a human might overlook. Finding these issues early saves everyone time. It prevents deals from falling through at the closing table. Your reputation stays intact. Your clients stay happy. And your stress levels stay manageable.

Smart Contract Management

Managing multiple deals at once is a logistical nightmare. One missed deadline can cost your client thousands of dollars. AI-driven platforms track every milestone in the escrow process. They send automated reminders to lenders, inspectors, and title companies. If a task isn’t completed, the system escalates the alert. You stay in control without having to micromanage every vendor. It’s like having a project manager who never sleeps.
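The remind-then-escalate logic can be sketched in a few lines of Python. The milestone structure, the three-day reminder window, and the task names are assumptions for illustration and are not modeled on any specific transaction platform.

```python
from datetime import date

def milestone_alerts(milestones: list[dict], today: date, remind_within_days: int = 3) -> list[tuple[str, str]]:
    """Return (task, action) pairs: 'escalate' if overdue, 'remind' if due soon."""
    alerts = []
    for m in milestones:
        if m["done"]:
            continue
        days_left = (m["due"] - today).days
        if days_left < 0:
            alerts.append((m["task"], "escalate"))
        elif days_left <= remind_within_days:
            alerts.append((m["task"], "remind"))
    return alerts

# Hypothetical escrow milestones for one deal.
escrow = [
    {"task": "appraisal", "due": date(2026, 3, 8), "done": False},
    {"task": "inspection", "due": date(2026, 3, 12), "done": False},
    {"task": "title search", "due": date(2026, 3, 9), "done": True},
    {"task": "loan approval", "due": date(2026, 3, 30), "done": False},
]
print(milestone_alerts(escrow, today=date(2026, 3, 10)))
```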

These tools also help with compliance. They ensure every document meets state and local regulations. As laws change, the AI updates its checklists automatically. You don’t have to worry about falling out of compliance when a new disclosure form is released. The tech handles the boring stuff so you can focus on the human side of the business. Real estate is still a relationship business. AI just gives you the room to breathe and build those connections.

Enhancing Marketing with Generative Content

Marketing is a non-stop treadmill. You need social media posts, blog articles, and property descriptions every single day. AI for real estate makes content creation effortless. You can feed a few bullet points about a house into a generator and get a professional listing description in seconds. It can write different versions for Instagram, LinkedIn, and the MLS. This ensures your branding is consistent across all platforms. You look like a marketing genius without the high agency fees.

Video marketing is the next frontier. AI tools can take your property photos and turn them into cinematic video ads with voiceovers. You don’t need to learn video editing software. You just upload the assets and let the algorithm do the work. These videos get much higher engagement than static posts. They stop the scroll. They get shared. And they lead to more inquiries. In 2026, if you aren’t doing video, you’re invisible. AI makes it possible for every agent to be a video star.

Personalized Email Campaigns

Generic newsletters go straight to the trash. People only want information that’s relevant to them. AI segments your email list based on interests and behavior. It sends market updates to sellers and mortgage tips to first-time buyers. It even optimizes the “send time” for each individual recipient. This means your emails land at the top of their inbox when they’re most likely to read them. Your open rates will soar.
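Send-time optimization can be as simple as picking the hour a recipient opens email most often. Here is a Python sketch, assuming you have each recipient's past open times as hours of the day (0 to 23). The default of 9 and the earliest-hour tie-break are arbitrary choices for illustration.

```python
from collections import Counter

def best_send_hour(open_hours: list[int], default: int = 9) -> int:
    """Most frequent past open hour; ties go to the earliest hour."""
    if not open_hours:
        return default
    counts = Counter(open_hours)
    top = max(counts.values())
    return min(hour for hour, n in counts.items() if n == top)

# Hypothetical open history for one recipient.
print(best_send_hour([8, 19, 19, 7, 8, 19]))
```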